Source – zdnet.com

At the core of any DevOps initiative is the judicious employment of containers and microservices, which dramatically speed up and simplify the jobs of developers and operations teams alike. While many of the tried-and-true rules of IT management apply, containers and microservices also add new considerations and new ways of doing things.

To explore many of the IT management concerns that accompany successful container and microservice deployments, we turn to the observations of two seasoned experts in the field: Ashesh Badani, VP and general manager of OpenShift for Red Hat, and Marc Wilczek, a highly regarded industry thought leader.

Badani, writing at The Enterprisers Project, observes that the ultimate goal of containers and microservices — and the DevOps they enable — is agility. “Containers corral applications in a neat package, isolated from the host system on which they run. Developers can easily move them around during experimentation, which is a fundamental part of DevOps. Containers also prove helpful as you move quickly from development to production environments.”

Developers are especially enthusiastic about microservices enabled by containers for a couple of reasons, Wilczek explains in a recent post in CIO. “They enable developers to isolate functions easily, which saves time and effort, and increases overall productivity. Unlike monoliths, where even the tiniest change involves building and deploying the whole application, each microservice deals with just one concern.”

As with any promising new technology or methodology, there are barriers to overcome, both organizational and technological. Badani and Wilczek offer sage advice on overcoming the major speed bumps that may hinder the move to a containerized, microservices-based architecture:

Organizational skills and readiness: Before any container or microservices effort can get underway, people across the enterprise need to be on board with it and ready to adapt their own mindsets. “IT leaders driving cultural change need support from both the C-suite and evangelists in the smaller teams,” says Badani, warning that all too often, “the easiest thing to do is just do nothing.” But today’s hyper-competitive and hyper-fast economic environment demands the speed and agility containers and microservices make possible. The good news, Badani adds, is “you don’t need all the resources or skills of Facebook in order to make significant business change. Start experiments with smaller groups. As you succeed and become more comfortable, expand out in terms of technology and talent. Encourage people on your team to engage with their peers outside the company, to talk about technology and culture challenges.”

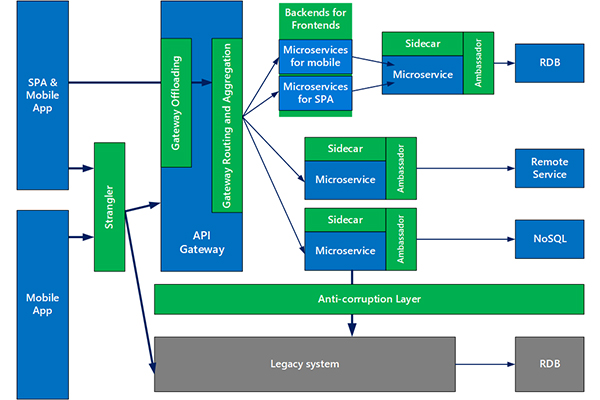

Platform: Choice of platform is key to any container and microservices effort. “A platform addresses management, governance, and security concerns,” says Badani. “While there are plenty of open source container tools to experiment with, an enterprise-grade container platform typically comprises dozens of open source projects, including Kubernetes orchestration, security, networking, management, build automation and continuous integration and deployment capabilities out of the box.”

Capacity and lifecycle management: “Both containers and microservices can easily be replaced and therefore tend to have a relatively short lifespan,” often measured in days, says Wilczek. “The short lifespan combined with the enormous density lead to an unprecedented number of items that require monitoring.” In addition, container images take up a lot of storage space. The challenge is that “with their own operating environment attached, images can easily reach a couple of hundred megabytes in size,” Wilczek says. He recommends ongoing lifecycle management practices, “especially retiring old images to free up shared resources and avoid capacity constraints.” Retiring containers and images quickly enough to free up that space requires a comprehensive lifecycle management effort.
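To make the idea of retiring old images concrete, here is a minimal Python sketch of a retention policy. It is an illustration under stated assumptions, not something Wilczek prescribes: the `ImageRecord` inventory type, the 30-day age limit, and the rule of always keeping the two newest images per repository are all hypothetical choices, and a real setup would read this metadata from its own registry or platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ImageRecord:
    """Hypothetical inventory entry for one container image."""
    repository: str
    tag: str
    created: datetime
    size_mb: float
    in_use: bool  # True if a running container still references the image


def select_images_to_retire(images, now, max_age_days=30, keep_latest=2):
    """Pick images that are unused, older than max_age_days, and not among
    the keep_latest newest images of their repository."""
    cutoff = now - timedelta(days=max_age_days)

    # Group the inventory by repository so the newest images are kept per app.
    by_repo = {}
    for image in images:
        by_repo.setdefault(image.repository, []).append(image)

    retire = []
    for repo_images in by_repo.values():
        newest_first = sorted(repo_images, key=lambda i: i.created, reverse=True)
        for image in newest_first[keep_latest:]:
            if not image.in_use and image.created < cutoff:
                retire.append(image)
    return retire


def reclaimable_mb(images):
    """Total size, in megabytes, that retiring the given images would free."""
    return sum(image.size_mb for image in images)
```

The function only selects candidates; actually deleting them is left to whatever registry or platform tooling is in place, which keeps the policy easy to review before anything is removed.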

Network layer: Wilczek cautions that networks — or even virtualized network layers — may prove to be bottlenecks in the performance of microservices and containerized architectures, and thus require “close monitoring in terms of performance, load balancing, and seamless interaction.”

Balancing legacy and cloud-native apps: Trade-offs between existing infrastructure and new, cloud-borne applications may be a sticking point, but they are normal, Badani explains. “Some CIOs still have COBOL apps to support. Grappling with both old and new technologies, and making tradeoffs, is normal. Some companies seek containers mostly to house cloud-native apps being created by application development teams, including new work and revamps of existing apps. These apps are often microservices-based. The goal is to break up an app into its underlying services, so teams can update the apps independently.”

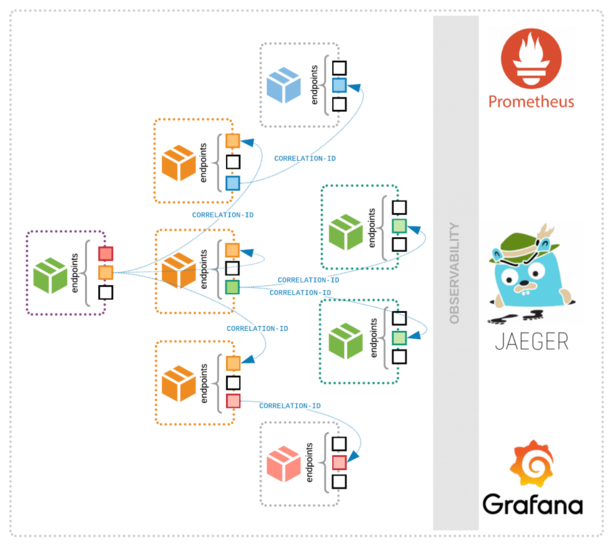

Monitoring: “Many traditional IT monitoring tools don’t provide visibility into the containers that make up those microservices, leading to a gap somewhere between hosts and applications that is ultimately off the radar,” Wilczek warns. “Organizations need to put one common monitoring in place comprising both worlds and covering the entire IT stack – from the bottom to the top.”

Manageability: Organizations need to ensure that there are enough staff resources dedicated to container and microservice deployment and management. “All too often, developers are tempted to add new functionality by creating yet another microservice,” Wilczek says. “In no time, organizations find themselves attempting to manage an army of containers and countless microservices competing for the same IT infrastructure underneath.” He recommends employing “analytics tools that discover duplicative services, and detect patterns in container behavior and consumption to prioritize access to systems resources.”
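As a rough illustration of what discovering duplicative services could look like, the Python sketch below compares the sets of operations each service exposes and flags pairs that overlap heavily. The approach (Jaccard similarity over operation names), the 0.5 threshold, and the service names in the usage note are assumptions made for this example; commercial analytics tools may work quite differently.

```python
from itertools import combinations


def jaccard(a, b):
    """Jaccard similarity of two sets: size of intersection over size of union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_duplicative_services(capabilities, threshold=0.5):
    """capabilities maps a service name to the set of operations it exposes.

    Returns (service_a, service_b, similarity) tuples whose similarity is at
    or above the threshold, most similar pair first.
    """
    pairs = []
    for (name_a, ops_a), (name_b, ops_b) in combinations(sorted(capabilities.items()), 2):
        score = jaccard(ops_a, ops_b)
        if score >= threshold:
            pairs.append((name_a, name_b, score))
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)
```

For example, given a hypothetical `billing` service exposing `charge`, `refund`, and `invoice`, and a `payments` service exposing `charge` and `refund`, the pair shares two of three distinct operations and would be flagged for review.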

Security: “Because containers contain system specific libraries and dependencies, they’re more prone to be affected by newly discovered security vulnerabilities,” says Badani, who recommends the use of “trusted registries, image scanning, and management tools” that can help automatically identify and patch container images.
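One small piece of the trusted-registry idea can be sketched in Python: checking that every image reference in a deployment comes from an approved registry. This is a simplified sketch, not a substitute for a platform's admission controls or a vulnerability scanner. The registry hostnames in the usage note are made up, and the parsing follows the common Docker convention that a reference without an explicit registry host defaults to `docker.io`.

```python
DEFAULT_REGISTRY = "docker.io"  # assumed default when no registry host is given


def registry_of(image_ref):
    """Return the registry host of an image reference such as
    'registry.example.com/team/app:1.0' or 'nginx:1.25'."""
    first, sep, _rest = image_ref.partition("/")
    # The first path component is a registry host only if it looks like one:
    # it contains a dot or a port, or it is 'localhost'.
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return DEFAULT_REGISTRY


def untrusted_images(image_refs, trusted_registries):
    """Return the image references whose registry is not on the allowlist."""
    trusted = set(trusted_registries)
    return [ref for ref in image_refs if registry_of(ref) not in trusted]
```

Calling `untrusted_images(["registry.example.com/team/app:1.0", "nginx:1.25"], ["registry.example.com"])` would return only `"nginx:1.25"`, since that reference resolves to the default public registry rather than the hypothetical approved one.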