Source: chess.com

Making an appearance in Lex Fridman’s Artificial Intelligence Podcast, DeepMind’s David Silver gave lots of insights into the history of AlphaGo and AlphaZero and deep reinforcement learning in general.

Today, the finals of the Chess.com Computer Chess Championship (CCC) start between Stockfish and Lc0 (Leela Chess Zero). It’s a clash between a conventional chess engine that implements an advanced alpha–beta search (Stockfish) and a neural-network-based engine (Lc0).

One could say that Leela Chess Zero is the open-source version of DeepMind’s AlphaZero, which controversially crushed Stockfish in a 100-game match (and then repeated the feat).

Even a few years on, the basic concept behind engines like AlphaZero and Leela Chess Zero is breathtaking: learning to play chess just by reinforcement learning from repeated self-play. This idea, and its meaning for the wider world, was discussed in episode 86 of Lex Fridman’s Artificial Intelligence Podcast, where Fridman had DeepMind’s David Silver as a guest.

Silver leads the reinforcement learning research group at DeepMind. He was lead researcher on AlphaGo and AlphaZero and co-lead on AlphaStar and MuZero. He has done a lot of important work in reinforcement learning, the area of machine learning concerned with how agents ought to take actions in an environment in order to maximize cumulative reward.

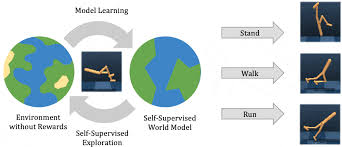

Silver explains: “The goal is clear: The agent has to take actions, those actions have some effect on the environment, and the environment gives back an observation to the agent saying: This is what you see or sense. One special thing it gives back is called the reward signal: how well it’s doing in the environment. The reinforcement learning problem is to simply take actions over time so as to maximize that reward signal.”
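The loop Silver describes can be sketched in a few lines of Python. This is only a toy illustration, not anything DeepMind uses: the environment (`CoinFlipEnv`), the agent (`CountingAgent`), and every parameter below are hypothetical names invented for this example. The agent guesses the outcome of a biased coin, receives an observation and a reward, and gradually learns which guess pays off more often.

```python
import random


class CoinFlipEnv:
    """Hypothetical toy environment: a biased coin the agent must guess."""

    def __init__(self, p_heads=0.8, seed=0):
        self.rng = random.Random(seed)
        self.p_heads = p_heads  # assumed bias, chosen only for illustration

    def step(self, action):
        # The action (0 = tails, 1 = heads) is the agent's guess.
        outcome = 1 if self.rng.random() < self.p_heads else 0
        reward = 1.0 if action == outcome else 0.0
        # The environment returns an observation and the reward signal.
        return outcome, reward


class CountingAgent:
    """Hypothetical agent: tracks the average reward of each action."""

    def __init__(self, epsilon=0.1, seed=1):
        self.rng = random.Random(seed)
        self.epsilon = epsilon  # probability of trying a random action
        self.totals = [0.0, 0.0]
        self.counts = [0, 0]

    def act(self):
        # Explore at random sometimes, and until both actions were tried.
        if 0 in self.counts or self.rng.random() < self.epsilon:
            return self.rng.randrange(2)
        means = [t / c for t, c in zip(self.totals, self.counts)]
        return means.index(max(means))

    def learn(self, action, reward):
        self.totals[action] += reward
        self.counts[action] += 1


def run(steps=2000):
    env, agent = CoinFlipEnv(), CountingAgent()
    total_reward = 0.0
    for _ in range(steps):
        action = agent.act()                     # the agent takes an action
        observation, reward = env.step(action)   # the environment responds
        agent.learn(action, reward)              # this simple agent ignores the observation
        total_reward += reward                   # the quantity to maximize
    return total_reward, agent


if __name__ == "__main__":
    total, agent = run()
    print(total, agent.counts)
```

Systems like AlphaZero replace the simple averaging here with deep neural networks and tree search, but the surrounding loop of action, observation, and reward has the same shape.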

The first part of the podcast is mostly about the board game go and DeepMind’s successful quest to build a system that can beat the best players in the world, something that had been achieved much earlier in many other board games, including chess. The story was also told in the 2017 documentary AlphaGo.

While AlphaGo was still using human knowledge to some extent (in the form of patterns from games played by humans), the next step for DeepMind was to create a system that wasn’t fed by such knowledge. Moving from go to chess, so from AlphaGo to AlphaZero, was an example of taking out initial knowledge and wanting to know how far you could go with self-play alone. The ultimate goal is to use algorithms in other systems and solve problems in the real world.

The first new version that was developed was a fully self-learning version of AlphaGo, without prior knowledge and with the same algorithm. It beat the original AlphaGo 100-0.

It was then applied in chess (AlphaZero) and Japanese chess (shogi), and in both cases, it beat the best engines in the world.

“It worked out of the box. There’s something beautiful about that principle. You can take an algorithm, and not twiddle anything, it just works,” said Silver.

In one of the most interesting parts of the podcast, Silver suggests that the (already incredibly strong) AlphaZero that crushed Stockfish could become even stronger, to the point of crushing its own current version. To be fair, he starts by calling this a falsifiable hypothesis:

“If someone in the future was to take AlphaZero as an algorithm and run it with greater computational resources than we have available today, then I will predict that they would be able to beat the previous system 100-0. If they were then to do the same thing a couple of years later, that system would beat the previous system 100-0. That process would continue indefinitely throughout at least my human lifetime.”

Earlier in the podcast, Silver explained this mind-boggling idea of AlphaZero losing to a future generation that can benefit from greater computing power and learn even more from playing itself:

“Whenever you have errors in a system, how can you remove all of these errors? The only way to address them in any complex system is to give the system the ability to correct its own errors. It must be able to correct them; it must be able to learn for itself when it’s doing something wrong and correct for it. And so it seems to me that the way to correct delusions was indeed to have more iterations of reinforcement learning. (…)

“Now if you take that same idea and trace it back all the way to the beginning, it should be able to take you from no knowledge, from a completely random starting point, all the way to the highest levels of knowledge that you can achieve in a domain.”

There is already a next step beyond AlphaZero, which is called MuZero. In this version, the algorithm, combined with tree search, works without even being told the rules of a particular game. Perhaps unsurprisingly, it’s performing superhumanly as well.

Why skip the step of feeding in the rules? Because DeepMind is ultimately working toward systems that can have meaning in the real world. And, as Silver notes, for that, we need to acknowledge that “the world is a really messy place, and no one gives us the rules.”