Source: bestgamingpro.com

In a study published on the preprint server Arxiv.org, DeepMind researchers describe a technique for generating reinforcement learning algorithms that discovers what to predict and how to learn it by interacting with environments. They claim the generated algorithms perform well on a range of challenging Atari video games, achieving “non-trivial” performance indicative of the technique’s generalizability.

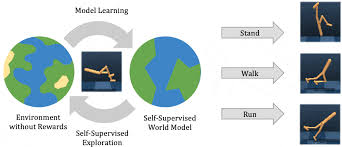

Reinforcement learning algorithms (algorithms that enable software agents to learn in environments by trial and error using feedback) update an agent’s parameters according to one of several rules. These rules are usually discovered through years of research, and automating their discovery from data could lead to more efficient algorithms, or algorithms better adapted to specific environments.
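To make “update rule” concrete, here is a minimal sketch of one well-known hand-designed rule, a REINFORCE-style policy gradient, applied to a toy two-armed bandit. It is an illustration only and is not taken from the DeepMind paper; the function names, the bandit environment, the learning rate, and the baseline step size are all assumptions chosen for brevity.

```python
import math
import random


def softmax(logits):
    """Turn raw action preferences into probabilities."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_bandit(reward_probs, steps=2000, lr=0.1, seed=0):
    """Train a softmax policy on a Bernoulli bandit (hypothetical toy setup)."""
    rng = random.Random(seed)
    logits = [0.0] * len(reward_probs)  # the agent's parameters
    baseline = 0.0                      # running average of observed reward
    for _ in range(steps):
        probs = softmax(logits)
        action = rng.choices(range(len(probs)), weights=probs)[0]
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        advantage = reward - baseline
        # Hand-designed update rule: move the log-probability of the chosen
        # action up or down in proportion to how much better than average
        # the reward was.
        for a in range(len(logits)):
            grad_log_pi = (1.0 if a == action else 0.0) - probs[a]
            logits[a] += lr * advantage * grad_log_pi
        baseline += 0.05 * (reward - baseline)
    return softmax(logits)


if __name__ == "__main__":
    # Arm 1 pays out more often, so the policy should come to prefer it.
    print(train_bandit([0.2, 0.8]))
```

Every design choice inside the loop (the advantage term, the baseline, the gradient of the log-probability) was worked out by researchers; the approach described in the article aims to learn such choices from data instead.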

DeepMind’s solution is a meta-learning framework that jointly discovers what a particular agent should predict and how to use the predictions for policy improvement. (In reinforcement learning, a “policy” defines the learning agent’s way of behaving at a given time.) Their architecture, learned policy gradient (LPG), allows the update rule (that is, the meta-learner) to decide what the agent’s outputs should be predicting, while the framework discovers rules via multiple learning agents, each of which interacts with a different environment.
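The two-level structure (an outer process that searches for an update rule, and inner agents that each learn in a different environment under that rule) can be sketched in a heavily simplified form. This is not LPG itself: the update rule below is a hypothetical pair of numbers rather than a neural network, the environments are toy bandits, and the outer loop uses plain random search as a stand-in for the meta-gradient training the paper describes. All names and constants are assumptions.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def lifetime_return(rule, reward_probs, steps=300, lr=0.2, seed=0):
    """Run one agent in one bandit environment; return its average reward.

    `rule` is a hypothetical two-number update rule (w_reward, w_bias): the
    learning signal for the chosen action is w_reward * reward + w_bias.
    """
    w_reward, w_bias = rule
    rng = random.Random(seed)
    logits = [0.0] * len(reward_probs)
    total = 0.0
    for _ in range(steps):
        probs = softmax(logits)
        action = rng.choices(range(len(probs)), weights=probs)[0]
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        total += reward
        signal = w_reward * reward + w_bias
        for a in range(len(logits)):
            grad_log_pi = (1.0 if a == action else 0.0) - probs[a]
            logits[a] += lr * signal * grad_log_pi
    return total / steps


def score(rule, environments):
    """Average return of fresh agents, one per environment, under one rule."""
    returns = [lifetime_return(rule, env, seed=i)
               for i, env in enumerate(environments)]
    return sum(returns) / len(returns)


def discover_rule(environments, candidates=30, seed=0):
    """Outer loop: random search over rules (a stand-in for meta-gradients)."""
    rng = random.Random(seed)
    best_rule = (0.0, 0.0)  # the "do nothing" rule: the policy never changes
    best_score = score(best_rule, environments)
    for _ in range(candidates):
        rule = (rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0))
        s = score(rule, environments)
        if s > best_score:
            best_rule, best_score = rule, s
    return best_rule, best_score


if __name__ == "__main__":
    envs = [[0.1, 0.9], [0.8, 0.3], [0.4, 0.6]]
    print(discover_rule(envs))
```

The point of the sketch is the division of labor: the inner loop never sees a hand-written advantage formula, only whatever signal the candidate rule produces, and a rule is judged by how well agents trained with it do across all environments.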

In experiments, the researchers evaluated LPG directly on complex Atari games including Tutankham, Breakout, and Yars’ Revenge. They found that it generalized to the games “reasonably well” compared with existing algorithms, despite the fact that the training environments consisted of basic tasks much simpler than Atari games. Moreover, agents trained with LPG managed to achieve “superhuman” performance on 14 games without relying on hand-designed reinforcement learning components.

The coauthors noted that LPG still lags behind some advanced reinforcement learning algorithms. But during the experiments, its generalization performance improved quickly as the number of training environments grew, suggesting it might be feasible to discover a general-purpose reinforcement learning algorithm once a larger set of environments is available for meta-training.

“The proposed approach has the potential to dramatically accelerate the process of discovering new reinforcement learning algorithms by automating the process of discovery in a data-driven way. If the proposed research direction succeeds, this could shift the research paradigm from manually developing reinforcement learning algorithms to building a proper set of environments so that the resulting algorithm is efficient,” the researchers wrote. “Additionally, the proposed approach may also serve as a tool to assist reinforcement learning researchers in developing and improving their hand-designed algorithms. In this case, the proposed approach can be used to provide insights about what a good update rule looks like depending on the architecture that researchers provide as input, which could speed up the manual discovery of reinforcement learning algorithms.”