Source: analyticsindiamag.com

Reinforcement learning has been one of the most active areas of AI since its early days. Its innovations are often ingenious, but we rarely see them in the real world. Robotics is one area where reinforcement learning is widely used: robots typically learn new behaviours through trial-and-error interaction with their environment.

However, the fundamental design of state-of-the-art reinforcement learning algorithms poses challenges. These come to light in unstructured settings, such as the inside of a house, where robotic systems must adapt to a diverse and unpredictable real world.

Simulation is another way to teach robots a behaviour, but this technique has its own limitations. One of them is, again, adaptability. The researchers at Berkeley AI Research (BAIR) note that improvements in simulated performance may not translate into improvements in the real world. Additionally, if a new simulation has to be built for every new task and environment, content creation can become prohibitively expensive.

Therefore, they suggest concentrating on reinforcement learning in the real world and the challenges that come with it. To explore these, they conducted a few experiments and came up with recommendations that could push the boundaries of real-world reinforcement learning.

Dealing With The Challenges

Reinforcement learning systems rely on the framework of the Markov decision process (MDP), but, say the researchers, an idealised MDP is not readily available to the learning algorithm in a real-world environment.
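For readers unfamiliar with the formalism, a standard textbook statement of an MDP (our summary, not the researchers' notation) shows where the idealisations enter:

$$
\mathcal{M} = (\mathcal{S}, \mathcal{A}, P, r, \rho_0, \gamma), \qquad
J(\pi) = \mathbb{E}\!\left[\sum_{t=0}^{T-1} \gamma^{t}\, r(s_t, a_t)\right],
\quad s_0 \sim \rho_0,\; a_t \sim \pi(\cdot \mid s_t),\; s_{t+1} \sim P(\cdot \mid s_t, a_t)
$$

Drawing a fresh start state $s_0 \sim \rho_0$ for every episode presumes that the environment can be reset; conditioning the policy on $s_t$ presumes that the true state is observed; and $r(s_t, a_t)$ presumes that a reward can be computed at every step.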

To begin with, they identify three main challenges that RL systems encounter in practical, scalable real-world settings:

- the absence of reset mechanisms,

- state estimation and

- reward specification

- On the first challenge, the researchers explain that RL algorithms almost always assume access to an episodic reset mechanism that returns the agent to a starting state. Such a mechanism does not usually exist in real-world environments, which makes the assumption impractical. So what do we do?

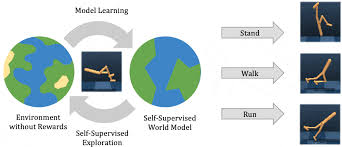

Recommendation: The Berkeley team suggests perturbing the agent's current state so that it never stays in the same state for too long. To do this, they propose a perturbation controller that is trained to take the agent to less-explored states of the world.
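To make the idea concrete, here is a toy sketch of a perturbation controller trained without any resets. Everything in it is a hypothetical stand-in rather than the researchers' implementation: a 10-state corridor as the "world", a count-based novelty bonus of 1/sqrt(n + 1) as the measure of "less explored", and tabular Q-learning with optimistic initial values as the learner. The task policy that such a controller would alternate with is omitted.

```python
import math
import random
from collections import defaultdict

N_STATES = 10        # hypothetical 1-D corridor with states 0..9
ACTIONS = (-1, +1)   # step left or step right


def step(state, action):
    """Move along the corridor; walls clip the motion. There is no reset."""
    return min(max(state + action, 0), N_STATES - 1)


def novelty_reward(visit_counts, state):
    """Count-based novelty bonus (an assumed measure): rarely visited states pay more."""
    return 1.0 / math.sqrt(visit_counts.get(state, 0) + 1)


def train_perturbation_controller(steps=2000, alpha=0.5, gamma=0.9,
                                  epsilon=0.1, optimistic_q=10.0, seed=0):
    """Tabular Q-learning on the novelty bonus, run as one long reset-free stream."""
    rng = random.Random(seed)
    q = defaultdict(lambda: optimistic_q)  # optimistic values make untried actions attractive
    visit_counts = defaultdict(int)
    state = 0
    for _ in range(steps):
        visit_counts[state] += 1
        if rng.random() < epsilon:                      # occasional random action
            action = rng.choice(ACTIONS)
        else:                                           # otherwise act greedily
            action = max(ACTIONS, key=lambda a: q[(state, a)])
        next_state = step(state, action)
        reward = novelty_reward(visit_counts, next_state)
        target = reward + gamma * max(q[(next_state, a)] for a in ACTIONS)
        q[(state, action)] += alpha * (target - q[(state, action)])
        state = next_state  # carry on from wherever the agent ended up
    return q, visit_counts


q, counts = train_perturbation_controller()
print(sorted(counts.items()))
```

Because the reward shrinks each time a state is revisited, the controller keeps drifting towards the parts of the corridor it has seen least, which is the behaviour the recommendation describes: the agent never settles in one state for long, even though nothing ever resets it.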

- Next comes the challenge of state estimation, which in practice becomes a problem of feature engineering. RL systems usually flourish when the environment is instrumented with sensors and other data-collection equipment, such as motion-capture or vision-based tracking systems, that report the state directly. Real-world environments are rarely equipped this way. So what do we do?

Recommendation: Using unsupervised representation learning techniques, the team compressed raw images into compact latent features. These latent features retain the key information in an image and make learning easier. For this work, they experimented with a variational autoencoder (VAE).
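As an illustration of what a VAE does with an image, the sketch below shows the forward pass and loss of a deliberately tiny, untrained linear VAE in plain Python. The class and method names are hypothetical, training is omitted (in practice one would use an autodiff library and convolutional layers), and this is not the researchers' architecture. The encoder maps a flattened image to the mean and log-variance of a small latent Gaussian; the mean is the kind of compact feature vector an RL policy could consume instead of raw pixels.

```python
import math
import random


def linear(weights, bias, x):
    """y = W x + b for plain Python lists (W is a list of rows)."""
    return [sum(w * xj for w, xj in zip(row, x)) + b for row, b in zip(weights, bias)]


def init_layer(n_out, n_in, rng, scale=0.1):
    """Small random weights and zero biases for one linear layer."""
    return ([[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)],
            [0.0] * n_out)


class TinyVAE:
    """Untrained linear VAE, used only to show the data flow and the loss."""

    def __init__(self, n_pixels, n_latent, seed=0):
        self.rng = random.Random(seed)
        self.enc_mu = init_layer(n_latent, n_pixels, self.rng)
        self.enc_logvar = init_layer(n_latent, n_pixels, self.rng)
        self.dec = init_layer(n_pixels, n_latent, self.rng)

    def encode(self, image):
        """Flattened image -> mean and log-variance of the latent Gaussian."""
        return linear(*self.enc_mu, image), linear(*self.enc_logvar, image)

    def reparameterize(self, mu, logvar):
        """z = mu + sigma * eps with eps ~ N(0, 1) (the reparameterisation trick)."""
        return [m + math.exp(0.5 * lv) * self.rng.gauss(0.0, 1.0)
                for m, lv in zip(mu, logvar)]

    def decode(self, z):
        """Latent vector -> reconstructed pixels in (0, 1) via a sigmoid."""
        return [1.0 / (1.0 + math.exp(-v)) for v in linear(*self.dec, z)]

    def loss(self, image):
        """Negative ELBO: reconstruction error plus KL to the N(0, I) prior."""
        mu, logvar = self.encode(image)
        recon = self.decode(self.reparameterize(mu, logvar))
        eps = 1e-7
        recon_loss = -sum(x * math.log(r + eps) + (1 - x) * math.log(1 - r + eps)
                          for x, r in zip(image, recon))
        kl = -0.5 * sum(1 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))
        return recon_loss + kl


vae = TinyVAE(n_pixels=16, n_latent=2)
image = [0.0, 1.0] * 8            # a made-up 4x4 binary "image", flattened
features, _ = vae.encode(image)   # 2 numbers summarising 16 pixels
print(features, vae.loss(image))
```

Training would minimise `loss` over many images; afterwards only the encoder is needed to turn each camera frame into a short feature vector.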

- The most important mechanism in any RL system is its reward function. Rewards are carefully designed mathematical nudges that push a system or machine towards a certain goal. In the real world, however, one cannot count on the external inputs that a reward function typically relies on. So what do we do?

Recommendation: The researchers propose a mechanism in which the agent assigns its own rewards. A human operator specifies the desired task by providing images that depict successful outcomes. These images are used to train a classifier, and the classifier's likelihood that an observation shows success is used as the agent's reward throughout the learning process.
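A minimal sketch of classifier-based rewards follows, with plain logistic regression on small feature vectors (for example, latent features from an encoder) standing in for the image classifier. The article does not say which non-success examples the classifier is trained against; here we assume, hypothetically, that they are observations the agent collected itself. Function names and hyperparameters are illustrative only.

```python
import math


def sigmoid(v):
    """Numerically stable logistic function."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def train_success_classifier(success_feats, other_feats, lr=0.5, epochs=500):
    """Logistic regression: label 1 for operator-provided successes, 0 otherwise."""
    dim = len(success_feats[0])
    w, b = [0.0] * dim, 0.0
    data = [(x, 1.0) for x in success_feats] + [(x, 0.0) for x in other_feats]
    for _ in range(epochs):
        for x, y in data:
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # gradient of the log-loss with respect to the logit
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b


def classifier_reward(w, b, feats, eps=1e-8):
    """Self-assigned reward: log-probability that the observation looks like a success."""
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, feats)) + b)
    return math.log(max(p, eps))


# Made-up 2-D features: successes cluster near (1, 1), other observations near (0, 0).
successes = [[1.0, 1.0], [0.9, 1.1], [1.1, 0.9]]
others = [[0.0, 0.0], [0.1, -0.1], [-0.1, 0.1]]
w, b = train_success_classifier(successes, others)
print(classifier_reward(w, b, [1.0, 1.0]), classifier_reward(w, b, [0.0, 0.0]))
```

Using the log-probability keeps the reward at or below zero and makes it rise as observations look more like the operator's success images, so no hand-written reward function is needed.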