Source: venturebeat.com

One way to test machine learning models for robustness is with what’s called a trojan attack, which involves modifying a model to respond to input triggers that cause it to infer an incorrect response. In an attempt to make these tests more repeatable and scalable, researchers at Johns Hopkins University developed a framework dubbed TrojAI, a set of tools that generate triggered data sets and associated models with trojans. They say that it’ll enable researchers to understand the effects of various data set configurations on the generated “trojaned” models, and that it’ll help to comprehensively test new trojan detection methods to harden models.

AI models that enterprises rely on for high-stakes decisions need to be protected against attacks, and this framework could help make them more secure.

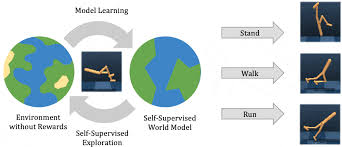

TrojAI is a set of Python modules that enable researchers to find and generate trojaned AI classification and reinforcement learning models. For classification, the user configures (1) the type of data poisoning to apply to the data set of interest, (2) the architecture of the model to be trained, (3) the training parameters of the model, and (4) the number of models to train. The main program then ingests this configuration and generates the desired models. For reinforcement learning, the user configures a poisonable environment, rather than a data set, on which the model will be trained.
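To make the four configuration items concrete, here is a minimal Python sketch of what such a configuration might look like. It is not TrojAI's actual API: the `ExperimentConfig` class, the `expand_jobs` helper, and every field name and value (`corner_patch`, `small_cnn`, and so on) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ExperimentConfig:
    """Hypothetical container for the four settings (not TrojAI's API)."""
    poisoning: dict        # (1) type of data poisoning to apply
    architecture: str      # (2) architecture of the model to be trained
    training: dict         # (3) training parameters
    num_models: int = 1    # (4) number of models to train

    def validate(self) -> None:
        # Reject obviously inconsistent settings before any training starts.
        fraction = self.poisoning.get("trigger_fraction", 0.0)
        if not 0.0 <= fraction <= 1.0:
            raise ValueError("trigger_fraction must be between 0 and 1")
        if self.num_models < 1:
            raise ValueError("num_models must be at least 1")


def expand_jobs(config: ExperimentConfig) -> list:
    """Return one job description per model a main program would train."""
    config.validate()
    return [
        {
            "model_id": i,
            "architecture": config.architecture,
            "poisoning": dict(config.poisoning),
            "training": dict(config.training),
        }
        for i in range(config.num_models)
    ]


# Assumed example values; none of these names come from TrojAI itself.
config = ExperimentConfig(
    poisoning={"trigger": "corner_patch", "trigger_fraction": 0.2},
    architecture="small_cnn",
    training={"epochs": 10, "batch_size": 32, "learning_rate": 1e-3},
    num_models=3,
)
jobs = expand_jobs(config)
```

Keeping the configuration as plain data, separate from the training loop, is one way to get the repeatability the researchers are after: the same configuration can be replayed to regenerate the same family of models.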

A data generation sub-module — datagen — creates a synthetic corpus containing image or text samples while the model generation sub-module — modelgen — trains a set of models that contain a trojan.
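As a rough illustration of what generating triggered data can involve, the sketch below embeds a small bright patch in a fraction of toy grayscale images and relabels those samples with an attacker-chosen class. The patch trigger, the relabeling scheme, and the function names `embed_trigger` and `poison_dataset` are assumptions made for this example, not a description of how datagen works internally.

```python
import random


def embed_trigger(image, size=2, value=255):
    """Return a copy of a 2D grayscale image with a bright bottom-right patch."""
    patched = [row[:] for row in image]
    for r in range(len(patched) - size, len(patched)):
        for c in range(len(patched[r]) - size, len(patched[r])):
            patched[r][c] = value
    return patched


def poison_dataset(samples, trigger_fraction, target_label, seed=0):
    """Embed the trigger in a random fraction of samples and relabel them.

    `samples` is a list of (image, label) pairs. The result is a list of
    (image, label, triggered) triples; the input list is left unchanged.
    """
    rng = random.Random(seed)  # fixed seed keeps the poisoning repeatable
    n_poison = int(round(trigger_fraction * len(samples)))
    chosen = set(rng.sample(range(len(samples)), n_poison))
    poisoned = []
    for i, (image, label) in enumerate(samples):
        if i in chosen:
            poisoned.append((embed_trigger(image), target_label, True))
        else:
            poisoned.append((image, label, False))
    return poisoned


# Ten blank 4x4 "images" spread over three classes (toy data).
clean = [([[0] * 4 for _ in range(4)], i % 3) for i in range(10)]
poisoned = poison_dataset(clean, trigger_fraction=0.3, target_label=2)
```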

TrojAI collects several metrics when training models on the trojaned data sets or environments: the performance of the trained model on all test examples that don’t have a trigger; its performance on examples that have the embedded trigger; and its performance on clean examples of the classes that were triggered during training. High performance on all three metrics is intended to provide confidence that the model has been successfully trojaned while maintaining high performance on the original data set for which it was designed.
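The three metrics can be sketched in a few lines of Python. This is an illustrative reading, not TrojAI's implementation: the `trojan_metrics` function, the example dictionary keys, and the toy `trojaned_model` are hypothetical, and scoring triggered examples against the attacker's target label is an assumption about what "performance" means in that case.

```python
def accuracy(pairs):
    """Fraction of (prediction, expected) pairs that match, or None if empty."""
    pairs = list(pairs)
    if not pairs:
        return None
    return sum(pred == expected for pred, expected in pairs) / len(pairs)


def trojan_metrics(examples, predict, triggered_classes):
    """Compute the three accuracies described above.

    Each example is a dict with keys 'x', 'label' (the original class),
    'triggered' (bool) and, for triggered examples, 'target' (the label
    the trigger is meant to produce; an assumption of this sketch).
    """
    clean = [e for e in examples if not e["triggered"]]
    trig = [e for e in examples if e["triggered"]]
    clean_subset = [e for e in clean if e["label"] in triggered_classes]
    return {
        "clean_all": accuracy((predict(e["x"]), e["label"]) for e in clean),
        "triggered": accuracy((predict(e["x"]), e["target"]) for e in trig),
        "clean_triggered_classes": accuracy(
            (predict(e["x"]), e["label"]) for e in clean_subset
        ),
    }


def trojaned_model(x):
    """Toy stand-in for a model: outputs class 2 whenever the trigger is present."""
    class_id, has_trigger = x
    return 2 if has_trigger else class_id


examples = [
    {"x": (0, False), "label": 0, "triggered": False},
    {"x": (1, False), "label": 1, "triggered": False},
    {"x": (2, False), "label": 2, "triggered": False},
    {"x": (0, True), "label": 0, "triggered": True, "target": 2},
    {"x": (1, True), "label": 1, "triggered": True, "target": 2},
]
metrics = trojan_metrics(examples, trojaned_model, triggered_classes={0, 1})
```

A model that ignores the trigger would still score well on the two clean metrics but poorly on the triggered one, which is why all three are reported together.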

In the future, the researchers hope to extend the framework to incorporate additional data modalities such as audio as well as tasks like object detection. They also plan to expand on the library of data sets, architectures, and triggered reinforcement learning environments for testing and production of multiple triggered models, and to account for recent advances in trigger embedding methodologies that are designed to evade detection.

The Johns Hopkins team is far from the only one tackling the challenge of adversarial attacks in machine learning. In February, Google researchers released a paper describing a framework that either detects attacks or pressures the attackers to produce images that resemble the target class of images. Baidu offers a toolbox, Advbox, for generating adversarial examples that can fool models in frameworks like MxNet, Keras, Facebook’s PyTorch and Caffe2, Google’s TensorFlow, and Baidu’s own PaddlePaddle. And MIT’s Computer Science and Artificial Intelligence Laboratory recently released a tool called TextFooler that generates adversarial text to strengthen natural language models.