Source: allaboutcircuits.com

Many of us are familiar with the concept of machine learning as it pertains to neural networks. But what about TinyML?

Surging Interest in TinyML

TinyML refers to the machine learning technologies on the tiniest of microprocessors using the least amount of power (usually in mW range and lower) while aiming for maximized results.

With the proliferation of IoT devices, big names like Renesas and Arm have taken a vested interest in TinyML—for instance, with Arm’s recent expansion of its AI portfolio with new machine learning and neural processing IP and Renesas’ release of its TinyML platform, Qeexo AutoML, which does not require code nor expertise in ML.

Other companies have zeroed in on partnerships that will help them exaggerate the utility of TinyML. Eta Compute and Edge Impulse recently announced their partnership in which they’ll combine the strengths of Eta Compute’s neural sensor processor, the ECM3532, with Edge Impulse’s tinyML platform. With an eye on battery capacity—a difficult point to work around in TinyML—this partnership hopes to accelerate the time-to-market of machine learning in billions of low-powered IoT products.

Another way we can assess the progress of TinyML is to reflect on the tinyML Summit, which took place earlier this year. Several of the presentations at the conference illustrate the key concepts of machine learning at the smallest level.

Reflections on the tinyML Summit

In February, AAC contributor Luke James forecasted the high aims for the 2020 tinyML Summit, which would, as in years past, spotlight developments in TinyML. The summit published presentations online and explored a number of categories pertaining to TinyML: hardware (dedicated integrated circuits), systems, algorithms and software, and applications.

Here are a few noteworthy presentations as they relate to design engineers.

Model Compression

Two of the presenters at the conference brought the realities of tinyML into focus by discussing a device we all have: mobile phones. In their discussion of “model compression,” MIT researcher Yujun Lin explained that typical machine learning devices, such as cell phones, have approximately 8 GB of RAM while microcontrollers have approximately 100 KB to 1 MB of RAM. Because microcontrollers have weight and activation constraints, they necessitate model compression.

The concept is to shrink the pre-trained large models into smaller ones without losing accuracy. This can be achieved in processes like pruning and deep compression. Pruning parses out synapses and neurons, resulting in ten times fewer connections. Deep compression takes pruning a step further with quantization (fewer bits per weight) and a technique known as “Huffman Encoding.”

The researchers suggested that by combining a concept known as neural-hardware architecture search with non-expert usage into the neural network, we can improve AI-geared hardware. The VP and lab director of Samsung’s Advanced Institute of Technology, Changkyu Choi, went into further detail on deep model compression, but his focus was on acceleration toward on-sensor AI.

Deep Reinforcement Learning

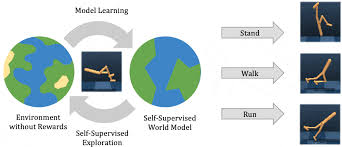

Another expert, Hoi-Jun Yoo, the ICT endowed chair professor from the engineering school at KAIST (Korea Advanced Institute of Science and Technology) spoke about the importance of deep reinforcement learning (DRL) accelerators within the deep neural network (DNN).

In his discussion, he points out that “software and hardware co-optimization for DNN training is necessary for low-power and high-speed accelerators in the same way it brought a dramatic increase in the performance of DNN inference accelerators.”

Yoo also explains that DRL is an essential factor in TinyML because it enables continuous decision-making in a low-power, “unknown environment,” or an environment in which labeled data is difficult to capture.

DNNs for Always-on AI for Battery-Powered Devices

Another company, Syntiant, showcased one of their devices, the NDP100 neural decision processor (NDP), to discuss a broader concept: the value of deep learning over algorithmic genius. Dr. Stephen Bailey, CTO of Syntiant, explained that the magic of the company’s NDP, an always-on and “listening” device, is its deep neural networks (DNN)—continuing Yoo’s discussion on DNNs.

The Syntiant NDP feeds acoustic features to a large DNN (no need for cascading or energy gating) and trains the DNN with large data sets and wide-ranging augmentation. Beyond its noise immunity, the NDP100 is extremely small in size (1.4 mm x 1.8 mm) and consumes less than 140 μW.

Since the summit, Syntiant has also released the NDP101, which is said to couple computation power and memory to exploit “the vast inherent parallelism of deep learning and computing at only required numerical precision.” Syntiant says that these features improve efficiency by 100 times compared to the stored program architectures you’d see in CPUs and DSPs.

Smaller Devices Call for Compressed Machine Learning

The hardware requirements for machine learning in larger systems are similar for TinyML in small IoT. But sometimes, the stakes are higher because of the device’s small size: accuracy, latency, and power consumption. As smaller IoT devices hit the market, engineers may increasingly dabble in TinyML, familiarizing themselves with concepts like deep neural networks, model compression, and deep reinforcement learning.