Source – https://towardsdatascience.com/

Estimate distance and position of solid objects using multiple low-cost ultrasound sensors.

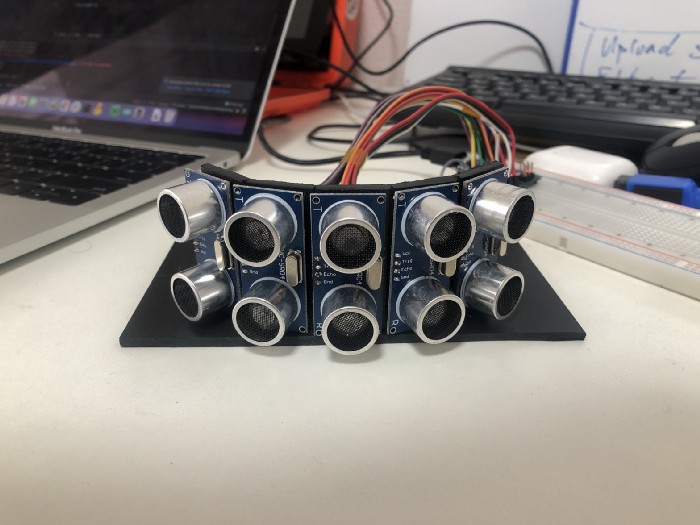

In this article we are going to build a sonar array from scratch, based on the cheap and popular HC-SR04 sensor. We will use an Arduino microcontroller to drive and read the sensors and to communicate with a host computer over a serial connection. Here is the working code for the full project; however, I recommend following the steps in the article to understand how it works and to customize it for your needs.

The HC-SR04 is a very popular ultrasound sensor, usually used in hobby electronics to build cheap distance sensors for obstacle avoidance or object detection. It has an ultrasound transmitter and receiver, which are used to measure the time of flight of an ultrasonic pulse bouncing off a solid object.

The speed of sound is roughly 343 m/s at a room temperature of 20 degrees Celsius. The ultrasound wave travels from the transmitter to the object and back to the receiver, so the distance to the object (in metres) is the speed of sound multiplied by half of the round-trip time (in seconds):

distance = 343 * ( time/2 )
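As a quick sanity check of the time-of-flight relation, here is a minimal Python sketch. The function name `echo_time_to_distance_m` is only illustrative and is not taken from the project code.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # approximate speed of sound in air at 20 °C


def echo_time_to_distance_m(round_trip_s: float) -> float:
    """Convert a round-trip echo time in seconds to a one-way distance in metres."""
    # The pulse travels to the object and back, so only half the time counts.
    return SPEED_OF_SOUND_M_PER_S * (round_trip_s / 2.0)


# An echo that comes back after 5.83 ms corresponds to roughly one metre.
print(f"{echo_time_to_distance_m(0.00583):.2f} m")
```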

However, the HC-SR04 sensor is very inaccurate and will give you a very rough and noisy distance estimate. Environmental factors like temperature and humidity affect the speed of the ultrasonic wave, and the material of the solid object and the angle of incidence degrade the distance estimate as well. There are ways to improve the raw readings, as we will learn later, but in general ultrasound sensors should be used only as a last resort to avoid a close collision, or to detect a solid object with a low distance resolution. They are not good navigation or distance estimation sensors. For that we could use more expensive sensors such as a LiDAR or a laser rangefinder.
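To give a feeling for how much temperature matters, the sketch below uses the common linear approximation for the speed of sound in dry air, c ≈ 331.3 + 0.606 · T (T in degrees Celsius). This is not part of the project code; it ignores humidity and assumes you have a temperature reading from somewhere else.

```python
def speed_of_sound_m_per_s(temp_c: float) -> float:
    """Approximate speed of sound in dry air (linear fit, fine near room temperature)."""
    return 331.3 + 0.606 * temp_c


def distance_m(round_trip_s: float, temp_c: float = 20.0) -> float:
    """One-way distance in metres for a round-trip echo time, compensated for temperature."""
    return speed_of_sound_m_per_s(temp_c) * round_trip_s / 2.0


# The same 10 ms echo read at 0 °C and at 30 °C differs by several centimetres.
for temp in (0.0, 30.0):
    print(f"{temp:4.1f} °C -> {distance_m(0.010, temp):.3f} m")
```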

I want to use this sonar array to detect nearby obstacles in front of my Raspberry Pi robot Rover4WD (this project will be covered in another article). The sensor’s effective angle of detection is around 15 degrees, so in order to cover a bigger area in front of the robot I want to use 5 sensors in total, arranged in an arc.

The benefit of this setup is that we can estimate not only the distance to an obstacle in front of the robot but also, roughly, the position of the object relative to the robot.
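One possible way to turn a reading into a rough position is to treat each sensor as pointing along a known angle and convert the polar reading to Cartesian coordinates. The sketch below is only an illustration: the mounting angles in `SENSOR_ANGLES_DEG` are hypothetical (the real ones depend on how the arc is built), and it ignores the offset between each sensor and the centre of the robot.

```python
import math

# Hypothetical mounting angles in degrees for sensors 0..4
# (0 = straight ahead, positive = towards the left of the robot).
SENSOR_ANGLES_DEG = (-60.0, -30.0, 0.0, 30.0, 60.0)


def obstacle_position(sensor_index: int, distance: float) -> tuple[float, float]:
    """Return (x, y) of the obstacle: x forward, y to the left, same unit as distance."""
    angle = math.radians(SENSOR_ANGLES_DEG[sensor_index])
    return distance * math.cos(angle), distance * math.sin(angle)


# An obstacle seen at 100 cm by the leftmost sensor.
x, y = obstacle_position(4, 100.0)
print(f"x={x:.1f} cm, y={y:.1f} cm")
```

Because each sensor covers a cone rather than a line, the result is a coarse estimate of the sector the obstacle is in, not an exact point.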

The HC-SR04 sensor has only four pins: two for ground and +5 V, plus the Echo and Trigger pins. To use the sensor we need to trigger the signal using the Trigger pin and measure the time until the echo is received via the Echo pin. As we don’t use the Echo and Trigger pins at the same time, they can share a single wire connected to one Arduino digital pin.

For this project we are going to use an Arduino Nano, which is small and widely available. There are also plenty of unofficial compatible clones for under $3 per unit.

For this breadboard setup we have connected both the Trig and Echo pins of each sensor to a single digital pin on the Arduino. We are going to use pins D12, D11, D10, D9 and D8 for sending and receiving signals. This hardware setup is limited only by the microcontroller’s available digital pins, but it can be expanded further using multiplexing, where one pin is shared by multiple sensors but only one sensor uses it at a time.

Traditionally, this is the sequential workflow we would need to manage to poll the sensors one by one:

- Trigger one sensor

- Receive the echo

- Calculate distance using the duration of the previous steps

- Communicate measurement using the serial port

- Process next sensor

However, we are going to use a readily available Arduino library called NewPing that allows you to ping multiple sensors while minimizing the delay between sensors. This will help us to measure the distance from all 5 sensors several times per second, almost at the same time. The resulting workflow looks like this:

- Trigger and echo all sensors async (but sequentially)

- When a sensor is done calculate distance

- When all sensors are done for the current cycle, communicate readings from all sensors using the serial port

- Start a new sensor reading cycle

The implementation is very straightforward and heavily commented in the code. Feel free to take a look at the full code.

I want to put special focus on the serial communication part, which runs when all the sensors are done with the distance measurement.

Originally I wanted to send the sensor readings over serial as strings; however, I realized the messages would be big and harder to parse on the host side of the project. In order to improve speed and lower the delay in the readings, I decided to switch to a simple binary format using a message of 5 bytes:

Byte 1: Character ‘Y’

Byte 2: Character ‘Y’

Byte 3: Sensor index [0–255]

Byte 4: High-order byte of the measured distance (as an unsigned integer)

Byte 5: Low-order byte of the measured distance (as an unsigned integer)

Bytes 1 and 2 are just the message header, used to decide where a new message starts when we read the incoming serial bytes. This approach is very similar to what the TF-Luna LiDAR sensor does to communicate with the host computer.
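To make the layout concrete, here is a small Python sketch that packs and unpacks one such 5-byte message with the standard `struct` module. The helper names `encode_reading` and `decode_reading` are illustrative, not the ones used in the project. The distance unit is whatever the Arduino sends; centimetres are assumed in the example value.

```python
import struct

HEADER = b"YY"
# Big-endian, no padding: 2-byte header, uint8 sensor index, uint16 distance
# (high-order byte first, as in the byte table above).
MESSAGE_FORMAT = ">2sBH"
MESSAGE_SIZE = struct.calcsize(MESSAGE_FORMAT)  # 5 bytes


def encode_reading(sensor_index: int, distance: int) -> bytes:
    """Build one 5-byte message, as the microcontroller side would send it."""
    return struct.pack(MESSAGE_FORMAT, HEADER, sensor_index, distance)


def decode_reading(message: bytes) -> tuple[int, int]:
    """Parse one 5-byte message into (sensor_index, distance)."""
    header, sensor_index, distance = struct.unpack(MESSAGE_FORMAT, message)
    if header != HEADER:
        raise ValueError("message does not start with the 'YY' header")
    return sensor_index, distance


message = encode_reading(3, 300)  # sensor 3, distance 300 (0x012C)
print(message.hex())              # 595903012c
print(decode_reading(message))    # (3, 300)
```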

On the host side we will use Python 3 to connect to the Arduino microcontroller via the serial port and read the incoming bytes as fast as we can. The ideal setup would be to use a UART port on the host computer, but plain serial over USB will do the job. The full code of the Python script is here.

There are several interesting things to note. First, we need to read the serial port on a separate thread so we won’t miss any incoming messages while we are processing the sensor readings or doing other work.
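The threading idea can be sketched as follows. This is not the project's script: `start_reader` is a hypothetical helper that accepts any object with a `read(size)` method. With pyserial you would pass a `serial.Serial` opened with a timeout; here an in-memory `io.BytesIO` stands in for the port so the example runs without hardware.

```python
import io
import queue
import threading


def start_reader(port, out_queue, stop_event, chunk_size=64):
    """Read raw bytes from `port` on a background thread and queue them."""

    def _run():
        while not stop_event.is_set():
            # A real serial port opened with a timeout blocks here for a
            # while; the in-memory stand-in returns b"" immediately.
            chunk = port.read(chunk_size)
            if chunk:
                out_queue.put(chunk)

    thread = threading.Thread(target=_run, daemon=True)
    thread.start()
    return thread


# Stand-in for the serial port: one message from sensor 2 with distance 123.
fake_port = io.BytesIO(b"YY\x02\x00\x7b")
incoming = queue.Queue()
stop = threading.Event()
reader = start_reader(fake_port, incoming, stop)

# The main thread is free to do other work and picks up data when ready.
print(incoming.get(timeout=2))

stop.set()
reader.join(timeout=2)
```

The queue decouples the two sides: the reader thread only moves bytes, and all parsing and filtering happens in the consumer.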

Secondly, we need to find the start of a message, marked by the header ‘YY’, before we start reading the sensor data. As the Arduino controller doesn’t wait for a host to connect to the serial port, we may connect in the middle of a message and read a partial one, which is discarded. It may take an additional second or two to get in sync with the microcontroller’s messages.
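A minimal sketch of this resynchronisation, assuming the 5-byte layout described earlier: bytes are accumulated in a buffer, anything before the first ‘YY’ is dropped, and only complete messages are consumed. The function name `extract_messages` is illustrative and not taken from the project.

```python
HEADER = b"YY"
MESSAGE_SIZE = 5  # 2 header bytes + sensor index + 2 distance bytes


def extract_messages(buffer: bytearray) -> list[tuple[int, int]]:
    """Consume complete messages from `buffer`; return (sensor_index, distance) pairs."""
    readings = []
    while True:
        start = buffer.find(HEADER)
        if start < 0:
            # No header yet: keep only the last byte, which could be the
            # first 'Y' of a header split across two reads.
            del buffer[:-1]
            break
        del buffer[:start]  # discard the partial message before the header
        if len(buffer) < MESSAGE_SIZE:
            break  # header found, but the rest has not arrived yet
        sensor_index = buffer[2]
        distance = (buffer[3] << 8) | buffer[4]  # high-order byte first
        readings.append((sensor_index, distance))
        del buffer[:MESSAGE_SIZE]
    return readings


# We connect mid-message: two stray bytes, one full message, one incomplete.
buf = bytearray(b"\x00\x2cYY\x01\x00\x64YY\x02\x01")
print(extract_messages(buf))  # [(1, 100)]
buf.extend(b"\x2c")           # the missing byte arrives with the next read
print(extract_messages(buf))  # [(2, 300)]
```

A stray pair of ‘Y’ bytes inside a partial message could still be mistaken for a header, so checking that the sensor index is in the expected range is a reasonable extra guard.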

Thirdly, we smooth the measurements with a simple moving average to remove some of the noise. In this case we use a window of just two measurements, because we need the distance to be updated very quickly to avoid hitting a close obstacle with the robot Rover4WD. You can use a bigger window depending on your requirements: a bigger window gives cleaner but slower-changing values, while a smaller window gives noisier but faster-changing values.
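A simple moving average like this can be sketched with a fixed-length `collections.deque`. The class below is an illustration rather than the project's code; in practice you would keep one filter instance per sensor.

```python
from collections import deque


class MovingAverage:
    """Simple moving average over the last `window` measurements."""

    def __init__(self, window: int = 2):
        # A deque with maxlen drops the oldest value automatically.
        self._values = deque(maxlen=window)

    def update(self, value: float) -> float:
        """Add a new measurement and return the current average."""
        self._values.append(value)
        return sum(self._values) / len(self._values)


# One filter per sensor; here a single sensor sees an obstacle approaching.
smoother = MovingAverage(window=2)
for raw in (100, 50, 10):
    print(raw, "->", smoother.update(raw))
```

With a window of two, the output lags the raw reading by at most one sample, which matches the goal of reacting quickly to close obstacles.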

What are the next steps? This project is ready to be integrated into a robotics or electronics project. In my case I’m using Ubuntu 20.10 with ROS2 on a Raspberry Pi 4 to control my robot Rover4WD. The next step for me is to build a ROS package that processes the measurements into detected obstacles and publishes transform messages, which will be incorporated into the bigger navigation framework using sensor fusion.

As always, let me know if you have any questions or comments to improve the quality of this article. Thank you and keep enjoying your projects!