Source: venturebeat.com

In reinforcement learning, the goal generally is to spur an AI-driven agent to complete tasks via systems of rewards. This is achieved either by learning a mapping (a policy) from states to actions that maximize an expected return (policy gradients), or by inferring such a mapping by calculating the expected return for a given state-action pair.

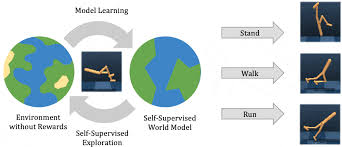

Model-based reinforcement learning (MBRL) aims to improve this by learning a model of the dynamics from an agent’s interactions with the environment that can be leveraged across many different tasks (aka transferability) and used for planning. To this end, researchers at Google, the University of Oxford, and UC Berkeley developed an approach — Ready Policy One (a not-so-subtle nod to Ernest Cline’s hit novel Ready Player One) — to acquiring data for training world models through exploration that jointly optimizes policies for both reward and model uncertainty reduction. The end result is that the policies leveraged for data collection also perform well in the true environment and can be tapped for evaluation.

Ready Policy One takes an active learning approach rather than focusing on optimization. In other words, it seeks to directly learn the best model rather than learning the best policy. A tailored framework allows Ready Policy One to adapt the level of exploration to improve the model in the fewest number of samples, and a mechanism stops gathering new samples in any given collection phase when the incoming data resembles what’s already been acquired.

In a series of experiments, the researchers evaluated whether their active learning approach for MBRL was more sample-efficient than existing approaches. In particular, they tested it on a range of continuous control tasks from research firm OpenAI’s Gym environment, and they found that Ready Policy One could lead to “state-of-the-art” efficiency when combined with the latest model architectures.

“We are particularly excited by the many future directions from this work,” wrote the study’s coauthors. “Most obviously, since our method is orthogonal to other recent advances in MBRL, [Ready Policy One] could be combined with state of the art probabilistic architectures … In addition, we could take a hierarchical approach by ensuring our exploration policies maintain core behaviors but maximize entropy in some distant unexplored region. This would require behavioral representations, and some notion of distance in behavioral space, and may lead to increased sample efficiency as we could better target specific state-action pairs.”