Source: venturebeat.com

DeepMind this week released Acme, a framework intended to simplify the development of reinforcement learning algorithms by enabling AI-driven agents to run at various scales of execution. According to the engineers and researchers behind Acme, who coauthored a technical paper on the work, it can be used to create agents with greater parallelization than in previous approaches.

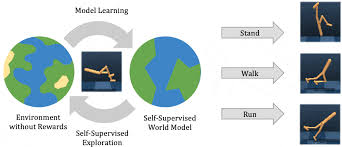

Reinforcement learning involves agents that interact with an environment to generate their own training data, and it’s led to breakthroughs in fields from video games and robotics to self-driving robo-taxis. Recent advances are partly attributable to increases in the amount of training data used, which has motivated the design of systems where agents interact with instances of an environment to quickly accumulate experience. This scaling from single-process prototypes of algorithms to distributed systems often requires a reimplementation of the agents in question, DeepMind asserts, which is where the Acme framework comes in.

Acme is a development suite for training reinforcement learning agents that attempts to address the issues of both complexity and scale, with components for constructing agents at various levels of abstraction, from algorithms and policies to learners. The thinking goes that this will allow for the swift iteration of ideas and the evaluation of those ideas in production, chiefly through training loops, extensive logging, and checkpointing.

Within Acme, actors interact closely with an environment, receiving the observations it produces and taking actions that in turn feed back into it. After observing the ensuing transition, actors are given an opportunity to update their state; this most often means updating their action-selection policies, which determine the actions they take in response to the environment. A special type of Acme actor, referred to as an “agent,” comprises both acting and learning components, and its state updates are triggered by some number of steps within the learner component. That said, agents for the most part defer their action selection to their own acting component.
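The loop described above can be sketched in a few lines of plain Python. This is only an illustration, not Acme's API: `ToyEnvironment`, `RandomActor`, `run_episode`, and every method name on them are hypothetical stand-ins chosen for this example.

```python
import random


class ToyEnvironment:
    """Hypothetical 1-D environment: the actor walks toward a goal position."""

    def __init__(self, goal=5, max_steps=50):
        self.goal = goal
        self.max_steps = max_steps

    def reset(self):
        self.position = 0
        self.steps = 0
        return self.position  # first observation

    def step(self, action):
        # action is -1 or +1; reward is 1.0 only on the step that reaches the goal
        self.position += action
        self.steps += 1
        done = self.position == self.goal or self.steps >= self.max_steps
        reward = 1.0 if self.position == self.goal else 0.0
        return self.position, reward, done


class RandomActor:
    """Hypothetical actor: selects actions, observes transitions, updates state."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.transitions = []
        self.num_updates = 0

    def select_action(self, observation):
        return self.rng.choice([-1, 1])

    def observe(self, observation, action, reward, next_observation):
        self.transitions.append((observation, action, reward, next_observation))

    def update(self):
        # A learning actor would refresh its policy here; this one only counts calls.
        self.num_updates += 1


def run_episode(environment, actor):
    """Runs one episode and returns the total reward."""
    observation = environment.reset()
    total_reward, done = 0.0, False
    while not done:
        action = actor.select_action(observation)
        next_observation, reward, done = environment.step(action)
        actor.observe(observation, action, reward, next_observation)
        actor.update()  # the actor's chance to update its state after the transition
        observation = next_observation
        total_reward += reward
    return total_reward


environment = ToyEnvironment()
actor = RandomActor(seed=0)
episode_return = run_episode(environment, actor)
```

In this sketch an “agent” would simply be an actor whose `update` method runs learning steps instead of incrementing a counter.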

Acme provides a data set module that sits between the actor and learner components, backed by a low-level storage system called Reverb that DeepMind also released this week. In addition, the framework establishes a common interface for insertion into Reverb, enabling different styles of preprocessing and the ongoing aggregation of observational data.
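To make the role of that storage layer concrete, here is a minimal in-memory sketch of a buffer that an actor writes to and a learner samples from. It does not use Reverb or reproduce its API; `ReplayBuffer`, `TransitionAdder`, and the `reward_scale` preprocessing step are assumptions made purely for illustration.

```python
import collections
import random


class ReplayBuffer:
    """Hypothetical in-memory stand-in for storage between actor and learner."""

    def __init__(self, capacity, seed=0):
        # A bounded deque evicts the oldest item once capacity is reached.
        self._items = collections.deque(maxlen=capacity)
        self._rng = random.Random(seed)

    def insert(self, transition):
        self._items.append(transition)

    def sample(self, batch_size):
        # Uniform sampling with replacement.
        return [self._rng.choice(self._items) for _ in range(batch_size)]

    def __len__(self):
        return len(self._items)


class TransitionAdder:
    """Hypothetical insertion interface that preprocesses data before storing it."""

    def __init__(self, buffer, reward_scale=1.0):
        self._buffer = buffer
        self._reward_scale = reward_scale

    def add(self, observation, action, reward, next_observation):
        scaled_reward = reward * self._reward_scale
        self._buffer.insert((observation, action, scaled_reward, next_observation))


# Actor side: write five transitions through the adder.
buffer = ReplayBuffer(capacity=3)
adder = TransitionAdder(buffer, reward_scale=0.5)
for t in range(5):
    adder.add(observation=t, action=1, reward=2.0, next_observation=t + 1)

# Learner side: draw a batch from whatever the buffer currently holds.
batch = buffer.sample(batch_size=4)
```

Putting preprocessing behind a single `add` call is what lets different insertion styles share the same storage.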

Acting, learning, and storage components are split among different threads or processes within Acme, which confers two benefits: environment interactions occur asynchronously with the learning process, and data generation accelerates. Elsewhere, Acme’s rate limiter enforces a desired relative rate of learning to acting, allowing both processes to run unblocked so long as they remain within some defined tolerance. For instance, if one of the processes starts lagging behind the other due to network issues or insufficient resources, the rate limiter will block the process that is ahead while the laggard catches up.
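One way to picture such a rate limiter is as a counter that compares how much data has been inserted with how much has been sampled, and holds back whichever side has run too far ahead. The sketch below is a simplified, single-threaded illustration under that assumption; `RatioRateLimiter` and its parameters are hypothetical and are not taken from Acme or Reverb.

```python
class RatioRateLimiter:
    """Hypothetical limiter keeping samples near samples_per_insert * inserts."""

    def __init__(self, samples_per_insert, tolerance):
        self.samples_per_insert = samples_per_insert
        self.tolerance = tolerance
        self.inserts = 0
        self.samples = 0

    def _surplus(self):
        # Positive: acting is ahead of learning. Negative: learning is ahead.
        return self.inserts * self.samples_per_insert - self.samples

    def can_insert(self):
        # Allow an insert only if it would not push acting beyond the tolerance.
        return self._surplus() + self.samples_per_insert <= self.tolerance

    def can_sample(self):
        # Allow a sample only if it would not push learning beyond the tolerance.
        return self._surplus() - 1 >= -self.tolerance

    def record_insert(self):
        self.inserts += 1

    def record_sample(self):
        self.samples += 1


# Acting runs ahead: two inserts with no samples exhaust the tolerance.
limiter = RatioRateLimiter(samples_per_insert=2, tolerance=4)
limiter.record_insert()
limiter.record_insert()
actor_blocked = not limiter.can_insert()   # True: the actor must wait
learner_free = limiter.can_sample()        # True: the learner may proceed

# The learner catches up, which unblocks the actor again.
limiter.record_sample()
limiter.record_sample()
actor_unblocked = limiter.can_insert()     # True
```

In a threaded setting the actor and learner would wait on these checks rather than poll them. The sketch also does not verify that any data exists before sampling, which a real storage system would need to handle.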

In addition to these tools and resources, Acme ships with a set of example agents meant to serve as reference implementations of their respective reinforcement learning algorithms as well as strong research baselines. More might become available in the future, DeepMind says. “By providing these … we hope that Acme will help improve the status of reproducibility in [reinforcement learning], and empower the academic research community with simple building blocks to create new agents,” wrote the researchers. “Additionally, our baselines should provide additional yardsticks to measure progress in the field.”