Source: cordis.europa.eu

Designing algorithms for more challenging data

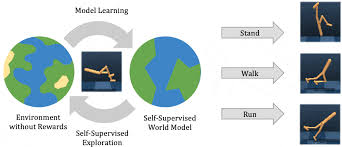

Machine Learning researchers often have to overcome the ‘sim-to-real’ transfer problem, where algorithmic feats accomplished in computer simulations must be repeated in real-world tests. DESIRE has produced a data-driven, robust decision-making algorithm to achieve just that.

Advancements in computing, such as the Go-playing program AlphaGo, both rely on and generate large amounts of data. To cater for this volume of data, researchers depend on Machine Learning (ML) algorithms developed from techniques such as Reinforcement Learning (RL), alongside Artificial Intelligence (AI) breakthroughs. However, while these algorithms can be effective within simulations, they often prove disappointing in the real world. Such performance failures matter in high-stakes areas such as robotics where, for reasons of practicality and expense, only a limited number of trials can be undertaken. The EU-supported DESIRE project set out to improve the robustness of the optimisation, learning and control algorithms underlying many innovations striving for autonomous control.

Kernel DRO

One of the key problems in the sim-to-real transfer is an ML phenomenon called ‘distribution shift’. Put simply, this is a discrepancy between the distribution of the data used for training and the distribution of the data encountered when testing in the real world. “This is usually because the test datasets prove to be too simplistic in their rendering of real-world conditions,” says research fellow Jia-Jie Zhu, who received support from the Marie Skłodowska-Curie Actions programme. “Distribution shift has been one of the major problems plaguing learning and control algorithms and a stumbling block to progress,” adds Zhu, from the Max Planck Institute for Intelligent Systems (the project host). The DESIRE project drew upon so-called kernel-based learning methods to reduce the impact of this distribution shift. These are computations which make algorithms more reliable by recognising patterns in data, identifying and then organising relations within the data according to predetermined features such as correlations or classifications. This enabled DESIRE to create an algorithm employing kernel distributionally robust optimisation (Kernel-DRO), in which decisions, such as control commands for robots, were robustly determined.
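
To make the idea of distribution shift concrete, the following is a minimal sketch, not the DESIRE algorithm itself. It estimates the maximum mean discrepancy (MMD), a standard kernel-based distance between two samples, for synthetic one-dimensional data. The Gaussian kernel, the bandwidth, the sample sizes, the size of the shift and all function names are illustrative assumptions.

```python
import numpy as np


def rbf_kernel(x, y, bandwidth=1.0):
    """Gaussian (RBF) kernel matrix between two 1-D sample arrays."""
    diff = x[:, None] - y[None, :]
    return np.exp(-diff**2 / (2.0 * bandwidth**2))


def mmd_squared(x, y, bandwidth=1.0):
    """Biased estimate of the squared maximum mean discrepancy."""
    return (
        rbf_kernel(x, x, bandwidth).mean()
        - 2.0 * rbf_kernel(x, y, bandwidth).mean()
        + rbf_kernel(y, y, bandwidth).mean()
    )


# Synthetic data (assumption): "simulation" samples and two test sets.
rng = np.random.default_rng(0)
train = rng.normal(0.0, 1.0, size=500)         # training / simulation data
test_same = rng.normal(0.0, 1.0, size=500)     # same distribution as training
test_shifted = rng.normal(1.5, 1.0, size=500)  # shifted "real-world" data

mmd_same = mmd_squared(train, test_same)
mmd_shifted = mmd_squared(train, test_shifted)

print(f"MMD^2, no shift:   {mmd_same:.4f}")
print(f"MMD^2, with shift: {mmd_shifted:.4f}")
```

The estimate stays close to zero when both samples come from the same distribution and grows when the test data are shifted. A distributionally robust method aims to make decisions that remain good for every distribution within some such distance of the training data, rather than for the training distribution alone.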

Broad applicability

DESIRE’s work is theoretical and contributes to the literature of mathematical optimisation, control and ML theory, but it also has a range of very practical implications. Indeed, a strength of the team’s Kernel-DRO solution is precisely this broad applicability. “Many of today’s learning tasks suffer from data distribution ambiguity. We believe that industry or business practitioners looking to improve robustness in their machine learning can easily apply our algorithm,” explains Zhu. To take the work further, Zhu is now aiming to create larger-scale learning algorithms which can cater for more random data inputs, suitable for industrial applications. For example, the principle of data robustness is being applied to model predictive control, a highly effective control method useful for safety-critical applications such as flight control, chemical process control and robotics.