Source: venturebeat.com

Reinforcement learning, which spurs AI to complete goals using rewards or punishments, is a form of training that’s led to gains in robotics, speech synthesis, and more. Unfortunately, it’s data-intensive, which motivated two research teams, one from Google Brain (one of Google’s AI research divisions) and the other from Alphabet’s DeepMind, to prototype more efficient means of executing it. In a pair of preprint papers, the researchers propose Adaptive Behavior Policy Sharing (ABPS), an algorithm that shares experience collected by a behavior policy adaptively selected from a pool of AI agents, and a framework, Universal Value Function Approximators (UVFA), that uses a single AI model to simultaneously learn several directed exploration policies with different trade-offs between exploration and exploitation.

The teams claim ABPS achieves superior performance in several Atari games while reducing variance among the top 25% of agents. As for UVFA, it doubles the performance of base agents on “hard exploration” games in the same suite while maintaining a high score across the remaining games; it’s the first algorithm to achieve a high score in Pitfall without human demonstrations or hand-crafted features.

ABPS

As the researchers explain, reinforcement learning faces practical constraints in real-world applications because it’s often expensive and time-consuming to perform, computationally speaking. Tuning hyperparameters (parameters whose values are set before the learning process begins) is key to optimizing reinforcement learning algorithms, but it requires data collection through interactions with the environment.

ABPS aims to expedite this by allowing experience sharing from a behavior policy (i.e., a state-action mapping, where a “state” represents the state of the world and an “action” refers to which action should be taken) selected from several agents trained with different hyperparameters. Specifically, it incorporates a reinforcement learning agent that selects an action from a legal set according to a policy, after which it receives a reward and an observation that’s determined by the next state.

Training the aforementioned agent involves generating a set of hyperparameters, where a pool of AI architectures and optimization hyperparameters such as learning rate, decay period, and more are selected. The goal is to find the set whose trained agent achieves the best evaluation results, while improving the data efficiency of hyperparameter tuning by training the agents simultaneously and selecting only one behavior agent to be deployed at each step.

The policy of the selected agent is used to sample actions and the transitions are stored in a shared space, which is constantly evaluated to reduce the frequency of policy selection. An ensemble of agents is obtained at the end of training, and from it, one or more top-performing agents are chosen to be deployed for serving. Instead of examining the behavior policy reward collected during training, a separate online evaluation for 50 episodes is run for every agent at each training epoch, so that the online evaluation reward reflects the performance of the agent in the pool.
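The selection-and-sharing loop described above can be sketched roughly as follows. This is a toy illustration under stated assumptions, not the researchers’ implementation: the `ToyAgent` class, the one-step toy environment, the epsilon-greedy selection rule, and the choice to reselect once per epoch are all hypothetical, since the article doesn’t specify them. Only the shared transition store and the 50-episode evaluation come from the description.

```python
import random
from collections import deque


class ToyAgent:
    """Hypothetical stand-in for an agent trained with one hyperparameter set."""

    def __init__(self, name, skill):
        self.name = name
        self.skill = skill  # made-up probability of choosing the rewarding action

    def act(self, state, rng):
        return 1 if rng.random() < self.skill else 0


def select_behavior_agent(eval_rewards, epsilon, rng):
    """Epsilon-greedy pick over the latest evaluation rewards (an assumed rule)."""
    if rng.random() < epsilon:
        return rng.randrange(len(eval_rewards))
    return max(range(len(eval_rewards)), key=lambda i: eval_rewards[i])


def evaluate(agent, episodes, rng):
    """Separate evaluation: mean reward over toy one-step episodes."""
    return sum(agent.act(None, rng) for _ in range(episodes)) / episodes


def run_abps_sketch(agents, epochs=5, steps_per_epoch=20, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    shared_buffer = deque(maxlen=10_000)  # transitions visible to every agent
    eval_rewards = [0.0] * len(agents)
    for _ in range(epochs):
        # Only the selected agent's policy interacts with the environment.
        idx = select_behavior_agent(eval_rewards, epsilon, rng)
        for step in range(steps_per_epoch):
            action = agents[idx].act(step, rng)
            reward = float(action)  # toy environment: action 1 pays 1
            shared_buffer.append((step, action, reward, step + 1))
        # A real system would now update every agent from shared_buffer.
        # Each agent is then evaluated separately for 50 episodes.
        eval_rewards = [evaluate(a, 50, rng) for a in agents]
    return shared_buffer, eval_rewards
```

The point of the sketch is the data flow: environment interactions are paid for once, by whichever agent is currently selected, while every agent in the pool can learn from the same stored transitions.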

In an experiment, the team trained an ensemble of four agents, with each using one of the candidate architectures on Pong and Breakout, and an ensemble of eight agents with six using variations of small architectures on Boxing. They report that ABPS methods achieved better performance on all three games, and that even random policy selection reached the same level of performance, although the whole ensemble used only as many environment actions as a single agent would.

UVFA

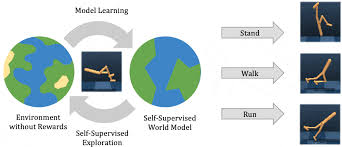

Exploration remains one of the major challenges in reinforcement learning, in part because agents fed weak rewards sometimes fail to learn tasks. UVFA doesn’t solve this outright, but it attempts to address it by jointly learning separate exploration and exploitation policies derived from the same AI, in such a way that the exploitative policy can concentrate on maximizing the extrinsic reward (solving the task at hand) while the exploratory ones keep exploring.

As the researchers explain, UVFA’s learning of exploratory policies serves to build a shared architecture that continues to develop even in the absence of extrinsic, or task-provided, rewards. Reinforcement learning helps to approximate an optimal value function corresponding to several intrinsic rewards, encouraging agents to visit all states in an environment while periodically revisiting familiar (but potentially not fully explored) states over several episodes.
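One way to picture a family of policies with different exploration and exploitation trade-offs is to give each policy its own weighting between the extrinsic (task) reward and the intrinsic (novelty) reward. The snippet below is a minimal sketch of that idea; the additive form, the name `beta`, and the specific weights are assumptions for illustration and are not taken from the article.

```python
def mixed_reward(extrinsic, intrinsic, beta):
    """Task reward plus a beta-weighted exploration bonus (assumed additive form)."""
    return extrinsic + beta * intrinsic


# Hypothetical weights: beta = 0 corresponds to the purely exploitative policy,
# larger values to progressively more exploratory ones.
BETAS = [0.0, 0.1, 0.3]


def rewards_for_all_policies(extrinsic, intrinsic, betas=BETAS):
    """One reward per policy in the family, computed from the same transition."""
    return [mixed_reward(extrinsic, intrinsic, b) for b in betas]
```

Because every policy in the family scores the same transition, a single shared model conditioned on the weight could in principle learn all of them from one stream of experience.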

It’s achieved with two modules: an episodic novelty module and an optional life-long novelty module. The episodic novelty module contains episodic memory and an embedding function that maps the current observation to a learned representation, such that at every step, the agent computes an episodic intrinsic reward and appends the state corresponding to the current observation to memory. As for the life-long novelty module, it provides a signal to control the amount of exploration across multiple episodes.
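The episodic novelty module’s per-step routine (embed the observation, compute an intrinsic reward against memory, then append to memory) might look roughly like the sketch below. The article doesn’t give the reward formula, so the nearest-neighbor kernel used here, along with the `EpisodicNovelty` class name and the parameters `k` and `eps`, is an assumed simplification. The embedding function is passed in rather than learned.

```python
import numpy as np


class EpisodicNovelty:
    """Toy episodic memory: observations far from what was seen earn more reward."""

    def __init__(self, embed, k=3, eps=1e-3):
        self.embed = embed  # assumed embedding function: observation -> vector
        self.k = k          # number of nearest neighbours to compare against
        self.eps = eps      # kernel width (hypothetical value)
        self.memory = []    # embeddings stored during the current episode

    def reset(self):
        """Clear memory at the start of a new episode."""
        self.memory = []

    def step(self, observation):
        z = np.asarray(self.embed(observation), dtype=float)
        similarity = 0.0
        if self.memory:
            sq_dists = np.sum((np.stack(self.memory) - z) ** 2, axis=1)
            nearest = np.sort(sq_dists)[: self.k]
            # Kernel is 1 for an identical embedding and near 0 for a distant one.
            similarity = float(np.sum(self.eps / (nearest + self.eps)))
        reward = 1.0 / np.sqrt(1.0 + similarity)
        self.memory.append(z)  # remember this observation for later steps
        return float(reward)
```

With an identity embedding, repeating an observation lowers its reward, while an observation far from everything in memory earns close to the maximum of 1.0. Calling `reset()` between episodes is what makes the novelty signal episodic.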

Concretely, the intrinsic reward is fed directly as an input to the agent, and the agent maintains an internal state representation that summarizes its history of all inputs — state, action, and rewards — within an episode. Importantly, the reward doesn’t vanish over time, ensuring that the learned policy is always partially driven by it.

In experiments, the team reports that the proposed agent achieved high scores in all Atari “hard-exploration” games, including Pitfall, while still maintaining a high average score over a suite of benchmark games. By leveraging large amounts of compute over the course of days running on distributed training architectures that collect experience from actors in parallel on separate environments, they say UVFA enables agents to exhibit “remarkable” performance.