Source: rtinsights.com

Netflix, Amazon, and PayPal – these well-recognized global brands fundamentally changed the way we consume entertainment, shop, and manage finances, respectively. However, without major advancements shaping the database industry over the last 40 years, these brands, synonymous with their cloud-based applications, would not exist. Specifically, today's instant, on-demand services have been made possible, in part, by the single-node Relational Database Management System (RDBMS).

As more services move online and digital innovations become mainstream, it's important to step back and understand how the underlying technology originated, how it evolved to meet both business and consumer demands, and how it must continue to evolve to support the growing demand for these applications (look no further than the streaming services launched in the past year alone – Disney+, Apple TV+, and more).

The Internet’s Infancy

During the Internet's infancy, Oracle made the RDBMS popular, at least among business users who relied on simple client-server applications to manage operations such as sales and employee data, customer relationships, supply chains, and more. These applications were monoliths, well suited to the monolithic databases businesses used at the time, and they had no real impact on the consumer world, which remained mostly disconnected from the then little-known World Wide Web.

The Open Source Database Era

The Internet's arrival as a mass-market medium in the mid-1990s heralded an explosion of web applications. Developers now needed faster, more efficient, and more cost-effective ways to meet the demands of connected applications. The open-source database era was born, filling the void left by stagnating monolithic databases.

The growing adoption of open-source databases helped make a feature-rich Internet possible. The mass adoption of web applications produced a proliferation of data from transactions, searches, and other activities that had moved online, and supporting that data often meant fragmenting it across multiple databases. However, as more and more features came to rely on the underlying database, existing database capabilities were stretched and could not meet the demands of these new technologies.

Applications had grown so large and complex that by the early 2000s, developers who needed to store their data in an RDBMS had to use a sharded deployment. In a sharded deployment, developers scale out by manually partitioning incoming data into subsets and storing each partition on a separate RDBMS instance, while keeping all of the data available to the application. However, sharding a SQL database (SQL being the universal language of relational databases) required developers to write more code, and often forced them to compromise on transactions, referential integrity, joins, and other major SQL features. Clearly, this approach had run its course, and a new era was needed to manage massive amounts of data without losing SQL's flexibility for ever-changing applications.
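To make the sharding trade-off concrete, here is a minimal Python sketch of hash-based shard routing. It assumes an illustrative schema (an `orders` table partitioned by `customer_id`) and uses separate in-memory SQLite databases as stand-ins for separate RDBMS instances; the `ShardedStore` class and its methods are hypothetical, not any product's API. A single customer's orders live on one shard, but a global aggregate has to be assembled in application code, and constraints that span shards are no longer enforced by the database.

```python
import sqlite3
import zlib


class ShardedStore:
    """Hypothetical hash-sharded store. Each shard is a separate in-memory
    SQLite database standing in for a separate RDBMS instance."""

    def __init__(self, num_shards: int) -> None:
        self.shards = [sqlite3.connect(":memory:") for _ in range(num_shards)]
        for conn in self.shards:
            # The PRIMARY KEY is only enforced within a single shard, so
            # global uniqueness becomes the application's responsibility.
            conn.execute(
                "CREATE TABLE orders ("
                "order_id INTEGER PRIMARY KEY, "
                "customer_id TEXT NOT NULL, "
                "amount REAL NOT NULL)"
            )

    def shard_for(self, customer_id: str) -> sqlite3.Connection:
        # crc32 is stable across processes (unlike Python's built-in hash()
        # for strings), so a customer always maps to the same shard.
        index = zlib.crc32(customer_id.encode("utf-8")) % len(self.shards)
        return self.shards[index]

    def insert_order(self, order_id: int, customer_id: str, amount: float) -> None:
        conn = self.shard_for(customer_id)
        with conn:  # commits on success; the transaction covers this shard only
            conn.execute(
                "INSERT INTO orders VALUES (?, ?, ?)",
                (order_id, customer_id, amount),
            )

    def orders_for(self, customer_id: str) -> list:
        # Single-shard query: the router knows exactly where this data lives.
        conn = self.shard_for(customer_id)
        cursor = conn.execute(
            "SELECT order_id, amount FROM orders "
            "WHERE customer_id = ? ORDER BY order_id",
            (customer_id,),
        )
        return cursor.fetchall()

    def total_revenue(self) -> float:
        # Cross-shard query: no single database sees all rows, so the
        # application scatters the query and gathers the partial sums.
        return sum(
            conn.execute("SELECT COALESCE(SUM(amount), 0) FROM orders").fetchone()[0]
            for conn in self.shards
        )


if __name__ == "__main__":
    store = ShardedStore(num_shards=4)
    store.insert_order(1, "alice", 30.0)
    store.insert_order(2, "bob", 12.5)
    store.insert_order(3, "alice", 7.5)
    print(store.orders_for("alice"))  # [(1, 30.0), (3, 7.5)]
    print(store.total_revenue())  # 50.0
```

Even in this toy, the extra code shows up exactly where the article says it does: routing, cross-shard aggregation, and constraints such as key uniqueness all move out of the database and into the application.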

More Services

As the Internet continued to surge, so did online services, everything from banking to healthcare to eCommerce and more. These services generated so much data that manual sharding became nearly impossible. As a result, developers began turning to a natively scalable approach called NoSQL, a class of distributed databases that gave developers the ability to run applications at scale. However, that scalability came at the cost of consistency, a trade-off developers readily accepted because they prioritized keeping pace with the expanding online landscape.
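The consistency trade-off can be illustrated with a toy model of asynchronous replication, one common way distributed stores gain availability and throughput. In this minimal Python sketch (the `EventuallyConsistentKV` class is hypothetical and does not model any particular NoSQL product), a write is acknowledged as soon as the primary copy has it and is shipped to a replica later, so a read from the replica can return stale data until replication catches up.

```python
from collections import deque
from typing import Deque, Dict, Optional, Tuple


class EventuallyConsistentKV:
    """Hypothetical toy store with one primary and one asynchronously
    updated replica."""

    def __init__(self) -> None:
        self.primary: Dict[str, str] = {}
        self.replica: Dict[str, str] = {}
        # Writes acknowledged by the primary but not yet applied to the replica.
        self.pending: Deque[Tuple[str, str]] = deque()

    def write(self, key: str, value: str) -> None:
        # Acknowledge immediately; do not wait for the replica.
        self.primary[key] = value
        self.pending.append((key, value))

    def read_from_replica(self, key: str) -> Optional[str]:
        # Fast and available, but may miss recent writes.
        return self.replica.get(key)

    def replicate(self) -> None:
        # Apply pending writes in order; a real system does this in the background.
        while self.pending:
            key, value = self.pending.popleft()
            self.replica[key] = value


if __name__ == "__main__":
    kv = EventuallyConsistentKV()
    kv.write("balance:alice", "100")
    kv.replicate()
    kv.write("balance:alice", "80")
    print(kv.read_from_replica("balance:alice"))  # 100 (stale)
    kv.replicate()
    print(kv.read_from_replica("balance:alice"))  # 80
```

Once `replicate()` runs, the replica converges to the primary's value; the window in between is the inconsistency that developers accepted in exchange for scale.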

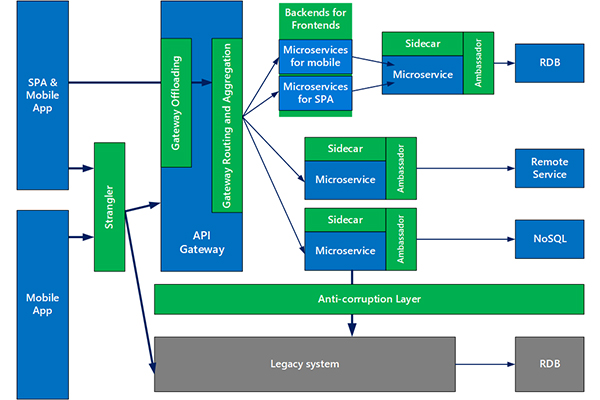

NoSQL today still solves for scale and availability, but it continues to compromise on the SQL feature set and on consistency. Meanwhile, monolithic databases still exist, but transactional applications originally built on Oracle, SQL Server, or PostgreSQL need to be rewritten to adapt to modern architectures like the public cloud and Kubernetes, an open-source container orchestration system for automating application deployment, scaling, and management (more on this later).

The customer expectations set by applications like Netflix, Amazon, and PayPal are now spilling over into business applications: enterprise applications need to be instantly accessible from anywhere and from any device. Meeting these expectations requires richer experiences that are possible only by fully leveraging the cloud, and doing so requires technology to evolve even further.

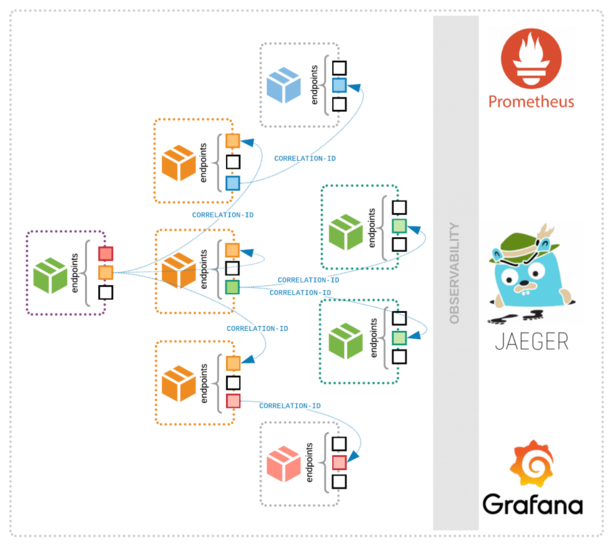

Microservices

The latest technology advancement to significantly help developers keep pace with user demand is microservices-based design. Microservices are independent processes that communicate with each other to accomplish tasks within a much larger application. More importantly, microservices help developers deliver new features faster and more effectively than ever before, giving users what they crave, any time and on any device of their choosing.

But digital transformation and the explosion of applications have created a need for more databases, machines, and data centers. While microservices are helping developers keep pace, new challenges are emerging:

- Rising Database Costs – Licensing and support costs from most database providers rise with the amount of data, so costs can outpace the value delivered. This imbalance helped drive the shift toward the more transparent open-source model. But that model can be limiting too, as many open-source offerings withhold features that most businesses require and make them available only in commercial versions.

- Legacy Databases – On-premises users may have fewer machines to deal with, but legacy databases are expensive, labor-intensive, and risky. For example, hosting an on-premises database in the cloud brings a much higher chance of failure, lower availability and consistency, and a barrier to scale.

- Enterprise Inertia – Moving large amounts of existing data to the cloud requires significant investments of time and resources. Even so, companies must choose an alternative to their legacy architecture and adopt multi-cloud and multi-region strategies to compete in the modern landscape.

Tomorrow’s Cloud

Many people, including developers, admins, network engineers, and IT staff, have worked tirelessly over the past two decades to ensure the Internet and all its applications run smoothly for both business and consumer needs. But every technological innovation unearths new challenges that must be addressed, which is why developers are now turning to multi-cloud deployments and need multi-cloud databases.

Currently, Kubernetes, which is used to run both stateless applications and stateful workloads such as databases, is the most effective approach to taking advantage of multi-cloud environments. Because containers are portable, developers can take advantage of the different strengths of different clouds while managing and operating them simultaneously with Kubernetes. This allows users to deploy applications in a cloud-neutral manner, using both public and private clouds as needed. Importantly, multi-cloud environments allow developers to create databases that serve the relational data modeling needs of microservices, provide zero-data-loss and low-latency guarantees, and scale on demand in response to planned and unplanned business events.

The last 40 years of database technology have laid a foundation for the immediate and unlimited access to highly desired business and entertainment applications that we enjoy today. With no end in sight for the Internet and the demand it has created, the next breakthrough in cloud deployment is only a matter of time. To keep pace with the inevitable change, developers need to remember the obstacles they’ve had to overcome in the past to understand how to stay one step ahead of today’s cloud challenges and deliver the next wave of digital innovations that will delight us in years to come.