RobotShop Launches Its Niche Marketplace In Robotics

Source: aithority.com Since its founding in 2003, RobotShop has become the most visited robotics website in the world. Present in several territories including Canada, the United States, Europe, and Read More

MACHINE LEARNING IS POWERING APP DEVELOPMENT

Source: analyticsinsight.net Since computers were introduced, the primary object of their evolution has been to take vigorous calculations off our plates. It meant easing tasks that Read More

The Antidote for Congested Data and Analytics Pipelines

Source: rtinsights.com Imagine that you’ve got a top-notch finance team, but the only tools they have are pen and paper. Thinking along these lines, imagine your sales Read More

UNESCO completes major progress on establishing foundation of ethics for AI

Source: itbrief.co.nz UNESCO’s Member States have announced there has been ‘major progress’ in the development of a global normative instrument for the ethics of artificial intelligence (AI). Read More

The rise of advanced robotics in industrial manufacturing

Source: manufacturingglobal.com Customization and flexibility are two of the hottest words in industrial manufacturing right now. Customers want something made just for them, whether it is a Read More

Dicker Data bolsters IoT play with two new vendors

Source: crn.com.au Dicker Data has added two new companies to its vendor roster to expand its internet of things (IoT) portfolio. The two user and device tracking Read More

10 BEST ONLINE AND FREE DEEP LEARNING COURSES

Source: analyticsinsight.net While deep learning is viewed as a small part of the field of artificial intelligence, it’s now a field that is by all accounts growing Read More

Arcelik selects AWS Cloud for machine learning and analytics

Source: infotechlead.com Amazon Web Services (AWS), an Amazon.com company, announced that Arcelik, a leading manufacturer of household appliances, has selected AWS as its cloud provider for machine Read More

IMPORTANCE OF FINANCIAL GOVERNANCE IN THE CLOUD

Source: analyticsinsight.net Companies today are becoming more data-driven, as their data is the fuel for their development engine to create new products, outsmart the Read More

8 Trending skills you need to be a good Python Developer

Source: ilounge.com Python, the general-purpose coding language, has gained much popularity over the years. Speaking of web development, app designing, scientific computing or machine learning, Python has Read More

Learning From Youth Culture: Generation Z And Technology

Source: inc42.com In the last few years, there has been a dramatic shift, from industry to industry, capturing trends and successfully sustaining culture, as the world unlocked Read More

List of Kubernetes Tools, Defenses Grows

Source: enterpriseai.news Container tools and security fixes for the Kubernetes cluster orchestrator continue to be rolled out as the microservices ecosystem evolves. With Kubernetes security concerns growing as Read More

Forget Snowflake, Cloudera Is a Better Big Data Stock

Source: fool.com The initial public offering (IPO) of Snowflake (NYSE:SNOW) grabbed a lot of attention as the cloud-based big data specialist’s S-1 revealed remarkable growth over the last several quarters. In addition, Read More

StradVision R&D Director To Reveal Insights Into Optimizing DNNs At 2020 Embedded Vision Summit

Source: aithority.com StradVision, whose AI-based camera perception software is a leading innovator in Advanced Driver Assistance Systems (ADAS) and Autonomous Vehicles (AVs), will reveal insights into optimizing Deep Read More

Google Meets 100% of Its Electricity Consumption Through Renewable Energy

Source: mercomindia.com Google’s Chief Executive Officer (CEO) Sundar Pichai, in a video message, declared that Google had eliminated its carbon legacy. This brings Google’s lifetime net carbon Read More

Machine learning helps grow artificial organs

Source: myvetcandy.com Researchers from the Moscow Institute of Physics and Technology, Ivannikov Institute for System Programming, and the Harvard Medical School-affiliated Schepens Eye Research Institute have developed Read More

Smart Construction using Artificial Intelligence

Source: geospatialworld.net Artificial intelligence (AI) is a hot topic at the moment, and with good reason. The technology is coming along in leaps and bounds, powered by Read More

Deep Learning Tool Accurately Selects High-Quality Embryos for IVF

Source: healthitanalytics.com A deep learning system was able to choose the highest-quality embryos for in-vitro fertilization (IVF) with 90 percent accuracy, according to a study published in eLife. When Read More

Cong members raise in RS reports of data mining by Chinese firm

Source: outlookindia.com New Delhi, Sep 16 (PTI) Congress members in Rajya Sabha on Wednesday expressed concern over a media report that suggested tracking of over 10,000 prominent Read More

Google and FAO launch new Big Data tool for all

Source: reliefweb.int 16 September 2020, Rome – Anyone anywhere can access multidimensional maps and statistics showing key climate and environmental trends wherever they are, thanks to a new Read More

A lesson on how to test microservices locally

Source: searchapparchitecture.techtarget.com In the early days of microservices, a developer wrote 20 lines of code, and then registered a subdomain to an IP address and port. Ten Read More

Why human-like robots elicit uncanny feelings

Source: nanowerk.com (Nanowerk News) Androids, or robots with humanlike features, are often more appealing to people than those that resemble machines — but only up to a Read More

79% of businesses in India feel the need to improve their IoT security approach: Palo Alto Networks

Source: crn.in Medical wearables, kitchen appliances and fitness equipment and other connected devices are regularly connecting to corporate networks, prompting technology leaders to warn that significant action Read More

Why Machine Learning Should Matter to You?

Source: dcvelocity.com In recent years, machine learning has taken the world by storm as more people attempt to automate processes and build their data faster. As a Read More

Top 5 International Programmes In Data Science Available In India

Source: bweducation.businessworld.in Every year, over 4 lakh Indian students go overseas to study; however, the outbreak of the pandemic has led to uncertainty around the feasibility of Read More

AI Is Making Our Lives Better In Weird And Wonderful Ways, Here’s How

Source: gizmodo.com.au When some people hear the term ‘artificial intelligence’ their initial reaction is to imagine a dystopian future where robots have risen up and overthrown humanity. Read More

Three Things To Consider In The Emerging AI And ML Cybersecurity Landscape

Source: aithority.com Cyber threats continue to escalate in both sophistication and volume. Traditional approaches to threat detection, however, are no longer sufficient to ensure protection. Correspondingly, machine Read More

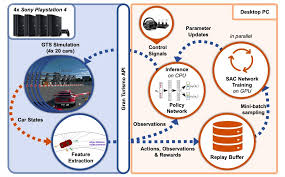

A deep learning model achieves super-human performance at Gran Turismo Sport

Source: techxplore.com Overview of the system devised by the researchers. Credit: Fuchs et al. Over the past few decades, research teams worldwide have developed machine learning and Read More

Oracle open-sources Java machine learning library

Source: infoworld.com Tribuo provides standard machine learning functionality including algorithms for classification, clustering, anomaly detection, and regression. Tribuo also includes pipelines for loading and transforming data and provides Read More

How Big Data Analytics Can Mitigate COVID-19 Health Disparities

Source: healthitanalytics.com September 15, 2020 – While the rapid spread of COVID-19 has exposed many unflattering healthcare truths, the glaring health disparities highlighted by the pandemic are perhaps the Read More