Source: insidebigdata.com

Data science is largely an enigma to the enterprise. Although there’s an array of self-service options to automate its various processes, the actual work performed by data scientists (and how it’s achieved) is still a mystery to your average business user or C-level executive.

Data modeling is the foundation of this discipline that’s responsible for the adaptive, predictive analytics that are so critical to the current data ecosystem. Before data scientists can refine cognitive computing models or build applications with them to solve specific business problems, they must rectify differences in data models to leverage different types of data for a single use case.

Since statistical Artificial Intelligence deployments like machine learning intrinsically require huge data quantities from diverse sources for optimum results, simply getting such heterogeneous data to conform to a homogeneous data model has been one of the most time-honored, and time-consuming, tasks in data science.

But not anymore.

Contemporary developments in data modeling are responsible for the automation of this crucial aspect of data science. By leveraging a combination of technologies revolving around cloud computing, knowledge graphs, machine learning, and Natural Language Processing (NLP), organizations can automatically map the most variegated data to a common data model, drastically accelerating this aspect of data science without writing code.

According to Lore IO CEO Digvijay Lamba, “By predefining the model [it’s] possible for AI to run and kind of repeatedly map disparate data into the model. In the end that leads to a significantly lower cost compared to building it in-house with data integration, data unification infrastructure.”

Most of all, leveraging pre-built, common data models drastically decreases the time spent on this data science requisite, helping shift this discipline’s focus towards tuning the models empowering statistical AI, instead of modeling their training datasets.

Cloud Architecture

The cloud is indispensable to rapidly deploying a common data model for the range of schema and data types found in machine learning training data. By effectively renting a common data model via Software-as-a-Service, organizations can avail themselves of serverless computing options to further decrease their data science overhead. Once their data is replicated to a cloud object store (like an S3 bucket, for example), they can specify their requirements for mapping these data to a predefined model that rectifies differences in schema, data structure, format, and other points of distinction.
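To make the idea of mapping differently shaped sources onto one predefined model concrete, here is a minimal Python sketch. It is not Lore IO's implementation; the source names, field names, and the `to_common_model` helper are all hypothetical, and the mappings are written by hand here, whereas the article describes a system that derives them automatically.

```python
# Hypothetical per-source mappings: source field name -> common-model attribute.
FIELD_MAPS = {
    "clinic_csv": {"pt_id": "patient_id", "rx_name": "drug", "filled_on": "fill_date"},
    "pharmacy_json": {"patientId": "patient_id", "medication": "drug", "dispensedAt": "fill_date"},
}


def to_common_model(source: str, record: dict) -> dict:
    """Rename a source record's fields to the common model's attribute names.

    Fields with no mapping are dropped rather than guessed at.
    """
    mapping = FIELD_MAPS[source]
    return {mapping[key]: value for key, value in record.items() if key in mapping}


# Two records describing the same kind of event with different schemas.
clinic_row = {"pt_id": 17, "rx_name": "amoxicillin", "filled_on": "2020-03-02", "room": "B"}
pharmacy_doc = {"patientId": 17, "medication": "ibuprofen", "dispensedAt": "2020-03-05"}

unified = [
    to_common_model("clinic_csv", clinic_row),
    to_common_model("pharmacy_json", pharmacy_doc),
]
```

After the mapping, both records share the attribute names `patient_id`, `drug`, and `fill_date`, so downstream analytics can treat them as one dataset.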

Lamba mentioned that such horizontal models are characterized by “a lot of rich depth to what the attributes are, the entities are, [and] the relationships are.” Business rules about data requirements for particular use cases are the means by which the underlying system “uses a no-code UI to automatically map the company’s data into the target model,” Lamba said.

Declarative Models and NLP

Natural language technologies (specifically NLP and Natural Language Querying) are an integral component of rapidly mapping differentiated data elements to a common model. These approaches enable users to tailor the unified model according to their own rules to perform what Lamba termed “declarative modeling. The idea here is you describe these rules in your own language; you don’t worry about the data.” Competitive solutions in this space rely on machine learning to iteratively improve mapping that language to the various data elements—arranged in a semantic knowledge graph—pertaining to any of the model’s attributes of choice.

For example, if a healthcare organization needs to understand whether or not patients received prescriptions in a timely fashion for pharmaceutical testing, users can simply input this information in natural language so the system “automatically maps what patient means, what prescription means, [and] where’s the prescription,” Lamba explained. With this approach, users can easily specify the different attributes for a common model without writing code. The system performs the actual mapping with machine learning techniques, which also underpin the natural language technologies necessary for understanding the terms used in business rules.
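The declarative idea can be sketched in Python as a rule stated as data and evaluated against records already in the common model. This is an illustration only: the system described above accepts natural language, while this sketch substitutes a structured rule, and the rule format, the attribute names, and the three-day threshold for "timely" are all assumptions.

```python
from datetime import date

# Hypothetical declarative rule: what "timely" means, stated as data rather than code.
TIMELY_RULE = {
    "entity": "prescription",
    "from": "prescribed_on",
    "to": "received_on",
    "max_days": 3,  # assumed threshold, not taken from the article
}


def satisfies(rule: dict, record: dict) -> bool:
    """Check one common-model record against a declarative rule."""
    elapsed = (record[rule["to"]] - record[rule["from"]]).days
    return 0 <= elapsed <= rule["max_days"]


prescriptions = [
    {"patient_id": 1, "prescribed_on": date(2020, 3, 1), "received_on": date(2020, 3, 2)},
    {"patient_id": 2, "prescribed_on": date(2020, 3, 1), "received_on": date(2020, 3, 9)},
]

# Patients whose prescriptions arrived within the rule's window.
timely = [p["patient_id"] for p in prescriptions if satisfies(TIMELY_RULE, p)]
```

The rule author only names entities and attributes of the target model; where those values physically live in the source data is left to the mapping layer.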

The Knowledge Graph Factor

Semantic knowledge graphs play a considerable role in facilitating the mapping required for multiple data sources to utilize a singular model. Once initially replicated to the cloud, datasets are aligned in a data lake (prior to any transformations) via a knowledge graph. According to Lamba, the relationship discernment capacity of these graphs is able to “detect the different entities, the relationships between the entities. Like, here’s some data about a patient, here’s data about a doctor, this patient went to this doctor.”

The knowledge graph methodology provides the framework for mapping data to a unified model by pinpointing how those data relate to one another. Business rules are necessary to denote how those data are used as entities and attributes in the target model. The graph is essential for the system’s capacity to comprehend the different concepts found in data via terms meaningful to business and data science objectives. For instance, “the knowledge graph contains all of the different synonyms of different things, like how can different things be worded in the world, and things like that,” Lamba commented.
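As a rough illustration of how stored synonyms let differently worded source fields resolve to one concept, consider the Python sketch below. The synonym sets, the `resolve` helper, and the normalization rule are hypothetical, and a production knowledge graph would hold far richer relationships than a flat lookup.

```python
from typing import Optional

# Hypothetical slice of a knowledge graph: canonical concept -> known synonyms.
SYNONYMS = {
    "patient": {"patient", "pt", "member", "subscriber"},
    "doctor": {"doctor", "physician", "provider", "md"},
    "prescription": {"prescription", "rx", "script", "medication order"},
}

# Invert the graph slice into a lookup from any surface form to its concept.
LOOKUP = {form: concept for concept, forms in SYNONYMS.items() for form in forms}


def resolve(term: str) -> Optional[str]:
    """Return the canonical concept for a term, or None if it is unknown."""
    normalized = term.strip().lower().replace("_", " ")
    return LOOKUP.get(normalized)
```

For example, `resolve("Rx")` and `resolve("medication_order")` both return `"prescription"`, while an unrecognized term such as `"invoice"` returns `None` and would need a human or a learned model to place it.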

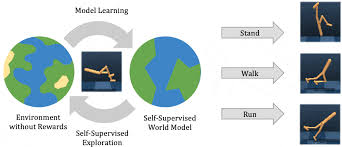

Machine Intelligence

Graph technologies also directly contribute to the machine learning techniques that cohere data to a single model, standardizing these various elements in the process. As such, this dimension of cognitive computing both supports a common data model by mapping data to it, and is in turn supported by that model, which is useful for preparing data for machine learning training datasets. Unsupervised learning approaches such as clustering algorithms, which are native to graph settings, are deployed to detect different data elements and populate the knowledge graph.
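The article does not say which clustering algorithm is used. As a simple stand-in, the Python sketch below links columns whose values overlap and treats each connected component of the resulting graph as one candidate data element. The column names, sample values, and the 0.4 threshold are assumptions chosen for illustration.

```python
from itertools import combinations


def jaccard(a: set, b: set) -> float:
    """Share of values two columns have in common (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_columns(columns: dict, threshold: float = 0.4) -> list:
    """Group columns into connected components of a value-overlap graph."""
    # Build an undirected graph: an edge links two columns with enough shared values.
    neighbors = {name: set() for name in columns}
    for a, b in combinations(columns, 2):
        if jaccard(columns[a], columns[b]) >= threshold:
            neighbors[a].add(b)
            neighbors[b].add(a)

    # Walk the graph; every connected component becomes one cluster.
    seen, clusters = set(), []
    for start in columns:
        if start in seen:
            continue
        component, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node not in component:
                component.add(node)
                stack.extend(neighbors[node] - component)
        seen |= component
        clusters.append(component)
    return clusters


# Hypothetical sample values drawn from columns in two different sources.
columns = {
    "pt_id": {17, 42, 99},
    "patientId": {17, 42, 63},
    "rx_name": {"amoxicillin", "ibuprofen"},
    "medication": {"ibuprofen", "amoxicillin", "statin"},
    "room": {"A", "B"},
}
clusters = cluster_columns(columns)
```

With these sample values, `pt_id` and `patientId` fall into one cluster, `rx_name` and `medication` into another, and `room` stays on its own, all without any labeled training data.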

According to Lamba, user-assisted reinforcement learning contributes to mapping data to a unified model. “When a customer maps his sources to our common data model, there’s reinforcement learning,” Lamba disclosed. “Every time customers map a new model, our AI learns. So we learn if something goes wrong; the customer will tell us something went wrong, we’ll fix it. On an ongoing basis AI is learning, so there’s reinforcement learning built in.”
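The feedback loop Lamba describes can be sketched as a running score per candidate mapping that rises when a user accepts it and falls when a user reports an error. This Python sketch is a deliberately simplified assumption, not a full reinforcement learning algorithm and not Lore IO's method; the `MappingMemory` class and its field names are hypothetical.

```python
from collections import defaultdict


class MappingMemory:
    """Remembers user feedback on source-field -> target-attribute mappings."""

    def __init__(self):
        # scores[source_field][target_attribute] -> accumulated feedback
        self.scores = defaultdict(lambda: defaultdict(float))

    def feedback(self, source_field: str, target: str, accepted: bool) -> None:
        """Reward an accepted mapping, penalize one the user flagged as wrong."""
        self.scores[source_field][target] += 1.0 if accepted else -1.0

    def suggest(self, source_field: str):
        """Return the best-scoring target so far, or None without positive evidence."""
        candidates = self.scores.get(source_field)
        if not candidates:
            return None
        best = max(candidates, key=candidates.get)
        return best if candidates[best] > 0 else None


memory = MappingMemory()
memory.feedback("rx", "drug", accepted=True)
memory.feedback("rx", "room", accepted=False)  # a user reports a wrong mapping
memory.feedback("rx", "drug", accepted=True)
```

After these three signals, `memory.suggest("rx")` returns `"drug"`, and a field with no history returns `None`, so each customer correction improves the suggestions offered to the next one.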

Speeding up Data Science

The use cases for a common data model are countless and exist at the core of any significant data integration attempt. Nevertheless, the need to map sundry data types to get the range of vectors required for truly informed, and accurate, machine learning results will surely increase as this form of AI becomes widely used by organizations. This approach is especially valuable to data science, since data modeling (part of a larger data engineering process involving transformation, data cleansing, and other preparation work) has traditionally monopolized so much of data scientists’ time.

Automating data modeling functions as a SaaS solution not only accelerates time to value for these professionals, but also for the business units depending on advanced analytics to improve their job performance. “Usually it’s valuable to customers for running analytics use cases that need diverse data,” Lamba posited. “If you need diverse data, [this] is a great way because you get this out of the box common data model unified very quickly.”