The CDOE – Certified DataOps Engineer program is recognized as a vital professional standard for those operating at the intersection of data engineering and operational reliability. This guide is written for engineers and technical leaders who are focused on synchronizing data workflows with agile delivery cycles. Within the contemporary ecosystem of platform engineering and cloud-native architecture, the need for automated, high-quality data pipelines is consistently highlighted. This analysis is intended to support informed decisions about professional development and the strategic implementation of DataOps principles. DataOpsSchool provides the structured framework and specialized training required to master this domain and achieve certification.

What is the CDOE – Certified DataOps Engineer?

The CDOE – Certified DataOps Engineer is a professional designation that focuses on the lifecycle management of data through automated and collaborative methodologies. It is designed to bridge the gap between data science, data engineering, and IT operations, ensuring that data is delivered with both quality and speed. Unlike purely theoretical frameworks, a production-focused approach is prioritized, allowing practitioners to implement solutions that work in real-world scenarios. Modern engineering workflows are supported through the application of continuous integration and continuous delivery (CI/CD) to data pipelines. By aligning with enterprise practices, the certification ensures that data infrastructure is managed with the same rigor as application code.

Who Should Pursue CDOE – Certified DataOps Engineer?

A wide range of technical roles is found to benefit from the CDOE – Certified DataOps Engineer certification, including data engineers, SREs, and cloud architects. It is intended for software engineers who are transitioning into data-centric roles and for security professionals who must ensure data integrity within automated systems. Both beginners seeking a structured entry point and experienced professionals looking to formalize their expertise are accommodated. Engineering managers and technical leaders also find value in this certification to better oversee complex data initiatives across their organizations. From a global perspective, and specifically within the tech landscape of India, a growing demand for certified DataOps professionals is observed.

Why CDOE – Certified DataOps Engineer Is Valuable Now and Beyond

The long-term relevance of the CDOE – Certified DataOps Engineer rests on the persistent shift toward data-driven decision-making in large-scale enterprises. As organizations continue to scale their data operations, the adoption of DataOps is seen as a practical necessity rather than an optional trend. The program helps professionals stay relevant by focusing on core principles that transcend individual tool changes or specific cloud service providers. A significant return on time and career investment is typically realized as specialized skills in data orchestration become a key differentiator in the job market. Enterprise adoption is expected to expand, making this certification a stable foundation for long-term career growth in infrastructure and data domains.

CDOE – Certified DataOps Engineer Certification Overview

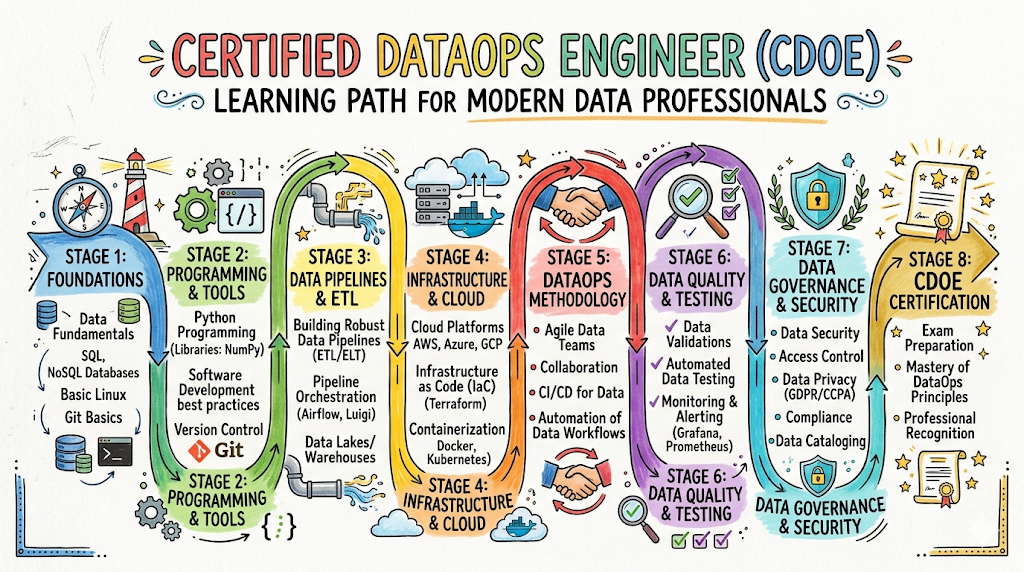

The certification program is delivered through the provider's official website. A multi-tiered assessment approach is utilized, moving from foundational knowledge to advanced architectural mastery of data systems. The structure is designed to provide practical ownership of the DataOps lifecycle, including aspects like data versioning, automated testing, and observability. Different certification levels are offered to match the varying degrees of expertise and responsibility found within the industry. The program is structured so that both technical competence and strategic understanding are validated through rigorous examination and project-based assessments.

CDOE – Certified DataOps Engineer Certification Tracks & Levels

Professional progression is facilitated through a structured hierarchy consisting of foundation, professional, and advanced levels. In the foundation stage, the core tenets of data automation and pipeline orchestration are introduced to the candidate. At the professional level, deep dives into specialized tracks such as DevOps integration and SRE for data platforms are conducted. The advanced level is reserved for those who architect complex, multi-cloud data ecosystems at an enterprise scale. Each level is carefully aligned with career progression milestones, ensuring that the skills acquired are immediately applicable to evolving job responsibilities in the field.

Complete CDOE – Certified DataOps Engineer Certification Table

| Track | Level | Who it’s for | Prerequisites | Skills Covered | Recommended Order |
| --- | --- | --- | --- | --- | --- |
| Core DataOps | Foundation | Entry-level Engineers | Basic Programming | CI/CD for Data, Automation | 1 |
| Data Infrastructure | Professional | SREs & Data Engineers | Foundation Level | Orchestration, Monitoring | 2 |
| Security & Governance | Professional | Security Engineers | Foundation Level | Data Masking, Compliance | 3 |
| Enterprise Architecture | Advanced | Architects & Leaders | Professional Level | Scaling DataOps, Multi-cloud | 4 |

Detailed Guide for Each CDOE – Certified DataOps Engineer Certification

CDOE – Certified DataOps Engineer – Foundation Level

What it is

The fundamental principles of DataOps are validated at this level, ensuring a common vocabulary and a thorough understanding of data lifecycles. It serves as the essential entry point for all subsequent specializations within the data automation domain.

Who should take it

Suitable roles include junior data engineers, software developers, and IT associates who are new to the concept of data automation. No extensive prior experience in DataOps is required, making it an ideal starting point for those looking to pivot their careers.

Skills you’ll gain

- Understanding of the DataOps manifesto and its core pillars.

- Basic proficiency in version control for data assets and schemas.

- Knowledge of automated data testing frameworks and quality gates.

- Ability to identify bottlenecks in manual data delivery processes.

Real-world projects you should be able to do

- A basic CI/CD pipeline for a small-scale data set can be established.

- Automated data quality checks can be integrated into a local development workflow.

- Version control for SQL scripts and transformation logic is successfully implemented.
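As an illustration of the automated data quality checks mentioned above, the following is a minimal sketch of a quality gate written in plain Python. The column names (`order_id`, `amount`) and the helper names are hypothetical and are not taken from the certification material; a real project would more likely rely on a dedicated testing framework.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def check_not_null(rows, column):
    """Fail if any row is missing a value in `column`."""
    missing = sum(1 for row in rows if row.get(column) is None)
    return CheckResult(f"not_null:{column}", missing == 0, f"{missing} missing value(s)")


def check_unique(rows, column):
    """Fail if `column` contains duplicate values."""
    values = [row.get(column) for row in rows]
    duplicates = len(values) - len(set(values))
    return CheckResult(f"unique:{column}", duplicates == 0, f"{duplicates} duplicate(s)")


def run_quality_gate(rows):
    """Run every check; the batch may proceed only if all checks pass."""
    results = [
        check_not_null(rows, "order_id"),  # hypothetical key column
        check_unique(rows, "order_id"),
        check_not_null(rows, "amount"),    # hypothetical measure column
    ]
    return all(result.passed for result in results), results


# Small in-memory batch standing in for a real extract.
sample_batch = [
    {"order_id": 1, "amount": 19.99},
    {"order_id": 2, "amount": None},
    {"order_id": 2, "amount": 5.00},
]
gate_passed, gate_results = run_quality_gate(sample_batch)
for result in gate_results:
    print("PASS" if result.passed else "FAIL", result.name, "-", result.detail)
```

In a local workflow or CI job, a failed gate would typically stop the pipeline before the batch reaches downstream consumers.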

Preparation plan

- 7–14 days: The official study guide is reviewed, and core terminology and concepts are thoroughly memorized.

- 30 days: Practical labs involving Git and basic pipeline automation tools are completed in a sandbox environment.

- 60 days: Mock exams are taken to identify knowledge gaps, and foundational concepts are applied to a personal data project.

Common mistakes

- The importance of the cultural shift in DataOps is often overlooked in favor of focusing solely on the technical tools.

- Inadequate time is spent understanding the nuances of data quality versus traditional application software quality.

Best next certification after this

- Same-track option: CDOE Professional Level.

- Cross-track option: Certified SRE Practitioner.

- Leadership option: DataOps Strategy for Managers.

CDOE – Certified DataOps Engineer – Professional Level

What it is

Advanced implementation strategies and the management of complex, production-grade data environments are validated at the professional level. The focus is shifted toward achieving pipeline stability, scalability, and cross-functional team collaboration in an enterprise setting.

Who should take it

This level is intended for experienced data engineers, SREs, and platform architects who have a minimum of one year of experience in automated environments. It is designed for those responsible for maintaining high uptime and performance in data pipelines.

Skills you’ll gain

- Mastery of containerization and orchestration for complex data workloads.

- Advanced monitoring and observability techniques for data pipelines and drift.

- Implementation of “Data as Code” practices across various staging and production environments.

- Optimization of resource allocation and cost for cloud-based data processing.

Real-world projects you should be able to do

- A high-availability data orchestration platform is deployed and maintained for an organization.

- Real-time monitoring dashboards for data drift and pipeline latency are built and maintained.

- Automated rollbacks for failed data deployments are successfully configured and tested.
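The data drift monitoring listed among these projects can be sketched very roughly as a mean-shift check: the mean of a new batch is compared with a baseline, measured in baseline standard deviations. This is a deliberately crude heuristic chosen for illustration, not a method prescribed by the program; the function names and the threshold of 3.0 are assumptions.

```python
from statistics import mean, stdev


def mean_shift_score(baseline, current):
    """Return how many baseline standard deviations the current mean has moved."""
    spread = stdev(baseline)
    if spread == 0:
        raise ValueError("baseline has no variation; the score is undefined")
    return abs(mean(current) - mean(baseline)) / spread


def has_drifted(baseline, current, threshold=3.0):
    """Flag drift when the mean shift exceeds `threshold` (an assumed default)."""
    return mean_shift_score(baseline, current) > threshold


# Hypothetical metric values, e.g. a daily average order amount.
baseline_values = [10, 12, 11, 9, 10, 12, 11, 9]
print(has_drifted(baseline_values, [10, 11, 12]))
print(has_drifted(baseline_values, [20, 21, 19]))
```

A production setup would usually track several statistics per column (null rate, distinct count, distribution tests) and feed the scores into the dashboards described above.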

Preparation plan

- 7–14 days: Deep dives into orchestration tools and monitoring frameworks are conducted using official documentation.

- 30 days: Complex multi-stage pipelines with multiple dependencies are built and optimized in a lab environment.

- 60 days: Case studies on enterprise-scale DataOps failures and recoveries are analyzed to understand advanced troubleshooting.

Common mistakes

- The complexity of maintaining state in data pipelines is frequently underestimated during the design phase.

- Monitoring is often set up for the underlying infrastructure but ignored for the actual data quality and accuracy.

Best next certification after this

- Same-track option: CDOE Advanced Architect.

- Cross-track option: Certified DevSecOps Professional.

- Leadership option: Director of Platform Engineering.

CDOE – Certified DataOps Engineer – Advanced Level

What it is

The ability to design and govern enterprise-wide DataOps strategies is validated at the advanced level. It focuses on large-scale architectural patterns, global compliance, and the long-term sustainability of the organization’s data infrastructure.

Who should take it

Senior architects, principal engineers, and technical directors who oversee multiple data and engineering teams should pursue this level. It is meant for those who define the technical roadmap and standards for an entire enterprise.

Skills you’ll gain

- Design of multi-tenant data platforms with strict security and isolation requirements.

- Strategic integration of AI/ML workflows into standard data operations.

- Advanced cost management and FinOps strategies for large-scale data estates.

- Governance and compliance automation in highly regulated industries like finance and healthcare.

Real-world projects you should be able to do

- A global data mesh architecture is designed and implemented across multiple cloud regions.

- Self-service data infrastructure for hundreds of internal users is established and governed.

- An enterprise-wide data governance and privacy policy is automated entirely via code.

Preparation plan

- 7–14 days: Advanced architectural whitepapers and global industry standards for data management are studied.

- 30 days: High-level design documents for complex, multi-cloud scenarios are drafted and peer-reviewed.

- 60 days: Mentorship sessions are held with industry leaders, and broad-scale strategy simulations are completed.

Common mistakes

- Solutions are sometimes over-engineered for a level of scale that is not yet required by the business.

- The human element of organizational change management and training is often neglected at this level.

Best next certification after this

- Same-track option: DataOps Fellow or Principal Researcher.

- Cross-track option: Cloud Solutions Architect Professional.

- Leadership option: Chief Technology Officer (CTO) track development.

Choose Your Learning Path

DevOps Path

The integration of data pipelines into standard software delivery cycles is the primary focus of this path. The alignment of data schema changes with application deployments is prioritized to prevent deployment failures. Tools typically used in traditional DevOps, such as CI servers and configuration management, are adapted for data contexts. This path is ideal for those who wish to unify the development and operations experience across all technical domains. It is recommended for engineers who want to bridge the gap between app dev and data.

DevSecOps Path

The security and integrity of data at rest and in transit is the central theme within this specialization. Automated compliance checks and vulnerability scanning are integrated directly into the data lifecycle. Sensitive data masking and granular access control are treated as code within the pipeline. This path is recommended for professionals who work in finance, healthcare, or any sector with strict regulatory requirements. It ensures that security is never an afterthought in the data delivery process.
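To make the idea of masking "treated as code" concrete, here is a minimal sketch in which the masking policy is a plain dictionary that can be versioned and reviewed like any other source file. The field names, the rule names (`hash`, `redact`), and the key handling are all hypothetical; keyed hashing is pseudonymization and should not be read as full anonymization.

```python
import hashlib
import hmac

# Hypothetical policy: which fields are sensitive and how each is masked.
MASKING_POLICY = {
    "email": "hash",
    "ssn": "redact",
}


def mask_value(value, rule, key):
    """Apply one masking rule to a single value."""
    if value is None:
        return None
    if rule == "hash":
        # Keyed SHA-256 gives a stable pseudonym without exposing the raw value.
        return hmac.new(key, str(value).encode("utf-8"), hashlib.sha256).hexdigest()
    if rule == "redact":
        return "***"
    raise ValueError(f"unknown masking rule: {rule}")


def mask_record(record, policy, key):
    """Return a copy of `record` with every field named in `policy` masked."""
    return {
        field: mask_value(value, policy[field], key) if field in policy else value
        for field, value in record.items()
    }


record = {"email": "user@example.com", "ssn": "123-45-6789", "country": "IN"}
print(mask_record(record, MASKING_POLICY, key=b"demo-key-not-for-production"))
```

Because the policy lives in code, a change to what counts as sensitive goes through the same review and pipeline checks as any other change.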

SRE Path

The reliability and availability of data systems are investigated through the lens of Site Reliability Engineering. Error budgets for data pipelines and service level objectives (SLOs) for data freshness are established and monitored. Toil reduction through the automation of routine data tasks is a core objective of this track. This path is suitable for those responsible for the 24/7 uptime of mission-critical data platforms. It focuses on making data systems as resilient as the applications they support.
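A freshness SLO and its error budget can be expressed in a few lines. The sketch below assumes that freshness is checked periodically and that each check yields a pass or fail; the function names and the 95% target are illustrative assumptions rather than values defined by the program.

```python
from datetime import datetime, timedelta


def is_fresh(last_loaded_at, checked_at, max_age):
    """A dataset is fresh if its latest load is no older than `max_age`."""
    return checked_at - last_loaded_at <= max_age


def error_budget_remaining(check_results, slo_target):
    """Return the unused fraction of the error budget (negative if overspent).

    `check_results` is a list of booleans, one per freshness check.
    `slo_target` is the required pass ratio, strictly between 0 and 1.
    """
    if not check_results:
        raise ValueError("at least one check result is required")
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be strictly between 0 and 1")
    allowed_failures = len(check_results) * (1 - slo_target)
    actual_failures = check_results.count(False)
    return 1 - actual_failures / allowed_failures


# Hypothetical check: the table was loaded at 12:00 and is inspected at 12:30.
loaded = datetime(2024, 1, 1, 12, 0)
print(is_fresh(loaded, datetime(2024, 1, 1, 12, 30), timedelta(hours=1)))

# 100 checks with 2 failures against an assumed 95% freshness SLO.
history = [True] * 98 + [False] * 2
print(round(error_budget_remaining(history, slo_target=0.95), 2))
```

When the remaining budget approaches zero, an SRE-style policy would typically pause risky pipeline changes until freshness recovers.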

AIOps Path

Artificial intelligence is utilized to enhance and automate IT operations and data management. Pattern recognition is applied to system logs and data metrics to predict potential failures before they impact the business. Automated incident response and remediation are driven by machine learning models trained on operational data. This path is tailored for engineers who want to build self-healing data infrastructures and reduce MTTR. It represents the future of automated system administration.

MLOps Path

The operationalization of machine learning models is addressed in this distinct and specialized track. The focus is placed on the reproducibility of experiments and the continuous deployment of models into production. Feature store management and model monitoring for drift are central themes covered in the curriculum. This path is necessary for organizations looking to move AI and ML from the research lab to production reliably. It bridges the gap between data science and production engineering.

DataOps Path

The foundational journey into specialized data automation is represented by this comprehensive path. Lean manufacturing principles are applied to data flows to reduce cycle times and significantly improve data quality. Collaboration between data providers and data consumers is facilitated through automated contracts and interfaces. This path serves as the backbone for any modern, data-driven organization seeking to optimize its data utility. It is the primary track for those dedicated to the DataOps discipline.
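The automated contracts between data providers and consumers can be pictured as a schema that both sides agree on and that the pipeline checks on every record. The sketch below is one simple way to do this in plain Python; the contract fields and the function name are hypothetical, and real teams often use a schema or validation library instead.

```python
# Hypothetical contract agreed between a producer and its consumers.
ORDER_CONTRACT = {
    "order_id": int,
    "amount": float,
    "currency": str,
}


def contract_violations(record, contract):
    """Return a list of human-readable problems; an empty list means compliant."""
    problems = []
    for field, expected_type in contract.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


print(contract_violations({"order_id": 7, "amount": 12.5, "currency": "INR"}, ORDER_CONTRACT))
print(contract_violations({"order_id": "7", "amount": 12.5}, ORDER_CONTRACT))
```

Keeping the contract in version control means a producer cannot change a field type without the change being visible to, and reviewable by, its consumers.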

FinOps Path

The economic efficiency and cost-effectiveness of data operations are analyzed in this specialized track. Cloud spend for data storage, processing, and transfer is monitored and optimized through automated policies. Accountability for costs is driven back to the engineering and data teams through transparent reporting. This path is essential for managing the high expenses often associated with large-scale cloud data estates. It ensures that data initiatives remain financially sustainable for the business.
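An automated cost policy of the kind described here might, in its simplest form, compare each team's spend with its budget and raise alerts. The sketch below assumes spend and budget figures are already available as dictionaries; the team names, amounts, and the 80% alert ratio are hypothetical.

```python
def budget_alerts(spend_by_team, budget_by_team, alert_ratio=0.8):
    """Return an alert message for every team that needs attention."""
    alerts = {}
    for team, spend in spend_by_team.items():
        budget = budget_by_team.get(team)
        if budget is None:
            alerts[team] = "no budget defined"
        elif spend > budget:
            alerts[team] = "over budget"
        elif spend >= alert_ratio * budget:
            alerts[team] = "approaching budget"
    return alerts


# Hypothetical monthly figures in a single currency.
monthly_spend = {"analytics": 900.0, "ml": 1200.0, "bi": 100.0, "adhoc": 50.0}
monthly_budget = {"analytics": 1000.0, "ml": 1000.0, "bi": 1000.0}
print(budget_alerts(monthly_spend, monthly_budget))
```

Reporting the result per team is what drives accountability back to the engineering and data teams that incur the cost.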

Role → Recommended CDOE – Certified DataOps Engineer Certifications

| Role | Recommended Certifications |
| --- | --- |
| DevOps Engineer | CDOE Foundation + Professional (DevOps Track) |
| SRE | CDOE Professional (Infrastructure Track) + SRE Practitioner |
| Platform Engineer | CDOE Professional + Advanced Architect |
| Cloud Engineer | CDOE Foundation + Professional (Cloud Track) |
| Security Engineer | CDOE Professional (Security Track) |
| Data Engineer | CDOE Foundation + Professional + Advanced |
| FinOps Practitioner | CDOE Foundation + FinOps Specialist |
| Engineering Manager | CDOE Foundation + Leadership Track |

Next Certifications to Take After CDOE – Certified DataOps Engineer

Same Track Progression

Deep specialization is pursued by moving sequentially through the foundation, professional, and advanced levels of the CDOE program. Expertise is further refined by focusing on specific niche domains like real-time streaming DataOps or complex graph database operations. Continuous learning is maintained by attending advanced workshops and participating in masterclasses. This ensures that the professional remains at the cutting edge of data orchestration technology. Long-term mastery in this track often leads to principal or fellow-level roles within an organization.

Cross-Track Expansion

Skill broadening is achieved by branching into related technical disciplines such as DevSecOps or Site Reliability Engineering. By understanding how data pipelines interact with security protocols and reliability frameworks, a more holistic view of the system is gained. This approach is highly valued in collaborative, T-shaped professional environments where cross-functional knowledge is a requirement. Transitions between different engineering roles are made more seamless through this intentional cross-pollination of skills. It prevents the development of professional silos and enhances problem-solving capabilities.

Leadership & Management Track

The transition to leadership is facilitated by certifications that focus on technical strategy, team building, and ROI analysis for data projects. Engineering managers use their technical foundation to lead large-scale digital transformation initiatives across the enterprise. Mentorship of junior staff and the design of organizational structures become the primary focus areas of this track. This path is designed for those who wish to influence the technical and strategic direction of the business from a high-level perspective. It combines technical depth with the soft skills required for executive leadership.

Training & Certification Support Providers for CDOE – Certified DataOps Engineer

DevOpsSchool

DevOpsSchool is recognized as a premier institution for technical training, offering an extensive range of programs in DevOps, SRE, and DataOps. A hands-on, practical approach is consistently maintained, ensuring that all participants gain real experience with industry-standard tools. The curriculum is frequently updated to reflect the latest technological shifts and best practices in the global market. Extensive support is provided by a dedicated community of experienced instructors and successful alumni. Both individual professionals and large corporate teams are accommodated through various flexible learning formats. The commitment to quality and career outcomes has made it a top choice for engineers worldwide.

Cotocus

Cotocus provides highly specialized consulting and professional training services focused on modern infrastructure and cloud-native technologies. A strong focus on high-level architecture and practical implementation strategies is maintained throughout its diverse offerings. The instructors at Cotocus are typically active industry practitioners who bring real-world scenarios and challenges into the classroom. Deep technical knowledge is combined with strategic insights to help organizations successfully transform their delivery pipelines. Training programs are often tailored to meet the specific technical and business needs of enterprise clients. The reputation for delivering high-impact results has established Cotocus as a trusted partner in technical education.

Scmgalaxy

Scmgalaxy serves as a comprehensive knowledge hub and a leading training provider for Software Configuration Management and DevOps professionals. A vast repository of technical tutorials, articles, and active community forums is maintained to support continuous learning. The training programs are meticulously designed to cover the entire spectrum of the software delivery lifecycle. Practical application is prioritized, with numerous hands-on labs and real-world projects integrated into the coursework. Scmgalaxy has successfully fostered a large, global community of practitioners who regularly share insights and solve complex challenges. It remains an essential resource for mastering the intricacies of build and release engineering.

BestDevOps

BestDevOps is dedicated to providing premium educational resources and clear certification paths for both aspiring and established DevOps engineers. The focus is kept on delivering actionable and concise information that can be immediately applied in a professional engineering setting. A variety of learning materials, including detailed video courses and interactive labs, are offered to cater to different learning preferences. The platform is designed to help professionals stay updated with the rapidly evolving and complex technical landscape. Community engagement is encouraged through active forums and peer-to-peer support networks. BestDevOps continues to be a reliable and authoritative guide for those pursuing excellence.

Devsecopsschool.com

A specialized focus on the critical integration of security into the DevOps lifecycle is provided by devsecopsschool.com. The curriculum is designed to address the urgent need for automated security and compliance within modern delivery pipelines. Practical skills in vulnerability management, secure coding, and infrastructure security are taught in depth. The programs are ideal for security professionals moving into DevOps and for engineers looking to broaden their security expertise. A proactive approach to threat modeling and risk mitigation is emphasized in every module. The institution plays a critical role in bridging the historical gap between development and security teams.

Sreschool.com

The principles and advanced practices of Site Reliability Engineering are the central focus of the curriculum at sreschool.com. Training is provided on how to design, build, and maintain highly reliable and efficient distributed systems at scale. Concepts such as error budgets, toil reduction, and automated incident management are explored through practical exercises. The instructors bring extensive experience from some of the world’s largest production environments to the training programs. A careful balance between theoretical knowledge and practical implementation is maintained. SRESchool is a primary destination for engineers tasked with ensuring the stability of modern digital services.

Aiopsschool.com

AIOpsSchool is focused on the cutting-edge application of artificial intelligence and machine learning to modern IT operations. The training programs cover the strategic use of big data and ML models to automate monitoring and incident response. Participants are taught how to implement self-healing infrastructures and sophisticated predictive analytics. The curriculum is designed to help organizations transition toward more intelligent and proactive operational models. A consistent focus on data-driven decision-making and operational efficiency is maintained. AIOpsSchool serves as a vital resource for professionals entering the next frontier of automated, intelligent system operations.

Dataopsschool.com

The primary hosting and delivery platform for DataOps certifications is found at dataopsschool.com. A dedicated environment for mastering the data lifecycle through automated and collaborative methodologies is provided. The institution is focused exclusively on the unique challenges and opportunities found within the specialized DataOps domain. High-quality course materials, expert instruction, and practical labs are integrated to ensure student success. The platform supports the development of a global standard for data engineering and operational excellence. It remains the central authority for professionals seeking to validate their expertise as Certified DataOps Engineers.

Finopsschool.com

The intersection of cloud finance and engineering operations is addressed in detail by the programs at finopsschool.com. Training is provided on how to manage and optimize cloud costs across large and complex enterprise environments. The focus is placed on establishing accountability and driving maximum value from all cloud investments. Practical strategies for cost allocation, forecasting, and automated optimization are taught by experts. The programs are designed for finance professionals, engineering managers, and cloud architects alike. FinOpsSchool helps organizations achieve the financial transparency and operational efficiency required in their cloud journeys.

Frequently Asked Questions (General)

- What is the general difficulty level of the CDOE – Certified DataOps Engineer exam?

The exam is considered moderately difficult, as it requires both a solid understanding of theoretical concepts and practical experience with automation tools.

- How much time is typically required to prepare for the foundation level?

For most professionals, a dedicated period of four to six weeks is sufficient to master the material and pass the assessment.

- Are there any mandatory prerequisites before taking the professional level exam?

Yes, successful completion of the foundation level certification is generally required before moving to any of the professional tracks.

- What is the typical return on investment for this certification?

Professionals often report increased salary potential and access to more senior roles in data engineering and platform architecture.

- How long does the certification remain valid?

The certification is usually valid for a period of two to three years, after which renewal or a higher-level certification is required.

- Is the exam conducted in an online proctored environment?

Yes, the assessment is typically delivered through a secure, online proctored platform for global accessibility and convenience.

- What programming languages are most useful for a DataOps engineer?

Python and SQL are the primary languages utilized, while knowledge of Bash and Go can also be highly beneficial in automation.

- Can the certification be pursued by those without a formal computer science degree?

Yes, practical experience and a strong grasp of the certification material are prioritized over formal academic credentials in this field.

- Are training materials included in the certification fee?

This depends on the package selected, but many enrollment options include access to official study guides and interactive practice labs.

- How does DataOps differ from traditional Data Engineering?

DataOps emphasizes automation, collaboration, and the continuous delivery of data, whereas traditional methods are often more siloed and manual.

- Is this certification recognized by major cloud providers?

The principles taught are cloud-agnostic and are recognized as industry best practices by major providers like AWS, Azure, and Google Cloud.

- Are there group discounts available for corporate training?

Yes, most providers offer tailored pricing and support for organizations looking to certify their entire engineering and data teams.

FAQs on CDOE – Certified DataOps Engineer

- How is the CDOE certification specifically tailored for the needs of modern enterprises?

The CDOE certification is developed with a focus on real-world production environments where data reliability and speed are paramount. It addresses the friction between data teams and operations by introducing shared methodologies. Enterprises benefit from a standardized approach to data quality and infrastructure management. This ensures that data pipelines are resilient to changes and can scale alongside the growing business needs.

- What role does automation play in the CDOE curriculum and the resulting career path?

Automation is the core pillar of the CDOE program. It is applied to data testing, deployment, and monitoring to eliminate human error. In a career context, this allows engineers to move away from repetitive manual tasks and toward high-value architectural work. A certified professional is equipped to build self-service platforms that empower other teams. This shift significantly increases the engineer’s overall impact within the organization.

- How does the certification help in managing data quality at scale?

The certification introduces automated testing frameworks that are integrated directly into the data pipeline. This allows for early detection of data drift and quality issues before they reach downstream consumers. By treating “Data as Code,” teams can apply version control and peer review to data transformations. This rigorous approach ensures that data remains accurate and trustworthy even as the volume of data increases.

- Is the CDOE certification suitable for professionals working in non-tech industries?

Yes, the principles of DataOps are highly applicable to industries like retail, finance, and manufacturing that rely on data-driven insights. Any organization that manages data pipelines can benefit from the automation and reliability practices taught in the program. The certification provides a universal framework that can be adapted to various vertical-specific requirements and compliance standards. It helps non-tech companies build tech-level data efficiency.

- What are the primary tools covered in the DataOps certification journey?

The journey covers a broad range of tools including version control systems like Git and orchestration platforms like Airflow or Prefect. Containerization tools such as Docker and Kubernetes are also explored for managing data workloads. Monitoring and observability tools for data quality are integrated into the practical labs. The focus remains on the principles of using these tools effectively within a DataOps framework.

- How does the CDOE program address the challenge of data security and privacy?

Security is integrated into the DataOps lifecycle through the DevSecOps track and foundational security modules. Techniques such as automated data masking and encrypted data transfer are taught as standard practices. Compliance audits are facilitated through the detailed logging and versioning of all data changes. This ensures that privacy and security requirements are met without slowing down the delivery of data.

- What is the significance of the collaboration aspect emphasized in the certification?

DataOps is as much about people and culture as it is about technology. The certification provides strategies for breaking down silos between data scientists, engineers, and operations teams. Common goals and shared metrics are established to align the efforts of these different groups. This collaborative environment leads to faster problem-solving and a more cohesive data strategy for the entire organization.

- How does this certification prepare engineers for the future of cloud-native data?

The CDOE program focuses on cloud-agnostic patterns that are essential for modern, multi-cloud data strategies. Engineers learn how to leverage cloud-native services for scaling and managing data infrastructure efficiently. Concepts like serverless data processing and managed orchestration are explored in detail. This ensures that professionals are prepared to lead their organizations through the complexities of cloud-native data evolution.

Final Thoughts

The decision to pursue the CDOE – Certified DataOps Engineer certification should be based on a clear assessment of one’s career goals and the evolving needs of the technical industry. It is observed that the transition to automated data lifecycles is not a temporary trend but a fundamental shift in how technology and information are managed. For an engineer, the acquisition of these skills represents a strategic move toward resolving the most critical bottleneck in modern enterprises: the data pipeline. The certification provides a structured and validated path to mastering these complex systems. While it requires a significant commitment of time and effort, the resulting expertise is highly portable and resilient to market fluctuations. It is recommended that professionals who are serious about long-term growth in the data and operations domain consider this certification as a core component of their professional development plan.